Google DeepMind has announced Gemini Robotics-ER 1.6, an upgraded reasoning model that gives robots stronger spatial and physical reasoning. The release focuses on helping robots understand and interact with their environments through improved visual perception, spatial awareness, and complex task planning. Robots using the model can now read analog instruments such as pressure gauges and sight glasses, a capability developed in collaboration with Boston Dynamics. The model can also call tools such as Google Search and third-party functions to carry out tasks, which positions it as a reasoning layer for autonomous agents.

We’re rolling out an upgrade designed to help robots reason about the physical world. 🤖 Gemini Robotics-ER 1.6 has significantly better visual and spatial understanding in order to plan and complete more useful tasks. Here’s why this is important 🧵 pic.twitter.com/rxT1lkYZZB

Google DeepMind (@GoogleDeepMind), April 14, 2026

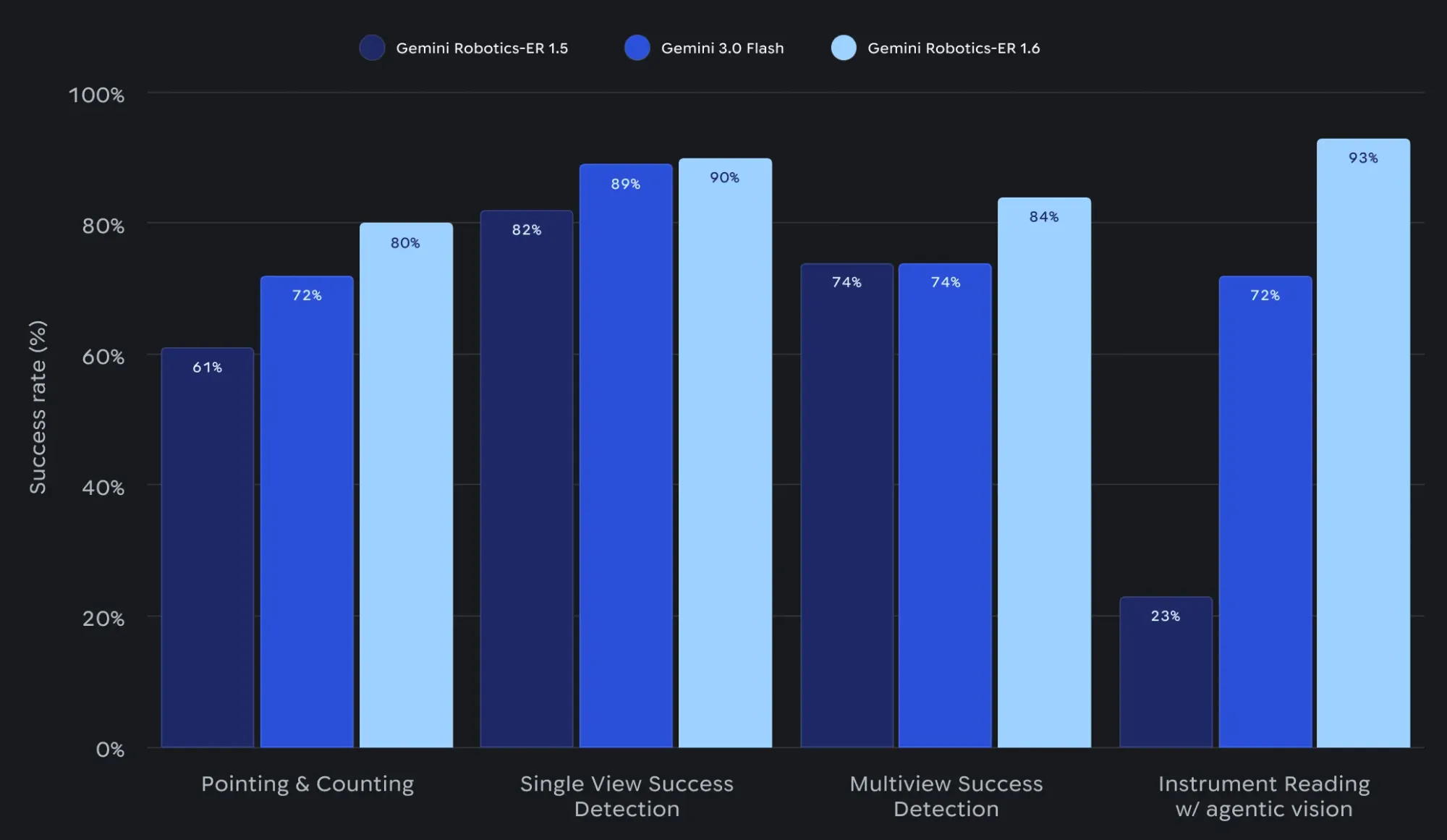

Gemini Robotics-ER 1.6 is available immediately to developers through the Gemini API and Google AI Studio, along with sample code to help with integration. The initial rollout targets robotics developers and research teams building or upgrading physical agents for industrial, commercial, or research settings. The release improves on Gemini Robotics-ER 1.5 and Gemini 3.0 Flash, particularly in pointing accuracy, counting, and success detection for physical tasks. Early feedback from robotics researchers highlights the model’s expanded capabilities and its potential to handle tasks that were previously difficult in real-world environments.
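To make the pointing and counting capabilities more concrete, here is a minimal sketch of how a developer might post-process a pointing result. It makes no API call. The response shape (a JSON list of objects with a `point` given as `[y, x]` normalized to 0-1000, plus a `label`) is an assumption based on how earlier Gemini Robotics-ER models are documented to return points, and the article does not confirm it for version 1.6. The function name `points_to_pixels` and the sample data are hypothetical.

```python
import json


def points_to_pixels(response_text, width, height):
    """Convert normalized points to pixel coordinates.

    Assumes (not confirmed by the article) that the model returns a JSON
    list of {"point": [y, x], "label": str} with y and x in the 0-1000 range.
    """
    items = json.loads(response_text)
    result = []
    for item in items:
        y_norm, x_norm = item["point"]  # assumed order: y first, then x
        result.append({
            "label": item.get("label", ""),
            "x": round(x_norm / 1000 * width),
            "y": round(y_norm / 1000 * height),
        })
    return result


# Hypothetical model output for a 640x480 camera frame.
sample = (
    '[{"point": [500, 250], "label": "pressure gauge"},'
    ' {"point": [100, 900], "label": "valve"}]'
)

pixels = points_to_pixels(sample, width=640, height=480)
count = len(pixels)  # counting reduces to the number of returned points

for p in pixels:
    print(f'{p["label"]}: x={p["x"]}, y={p["y"]}')
print(f"objects found: {count}")
```

In a real integration, the string passed to `points_to_pixels` would come from the model's text response rather than a hard-coded sample, and the exact output format should be checked against the current Gemini API documentation.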

Google DeepMind’s continued focus on embodied reasoning underscores its ambition to set new standards for robotic autonomy. The company is applying its AI and robotics expertise to complex physical reasoning problems, with the aim of accelerating the development and deployment of intelligent agents that operate in both digital and physical spaces.