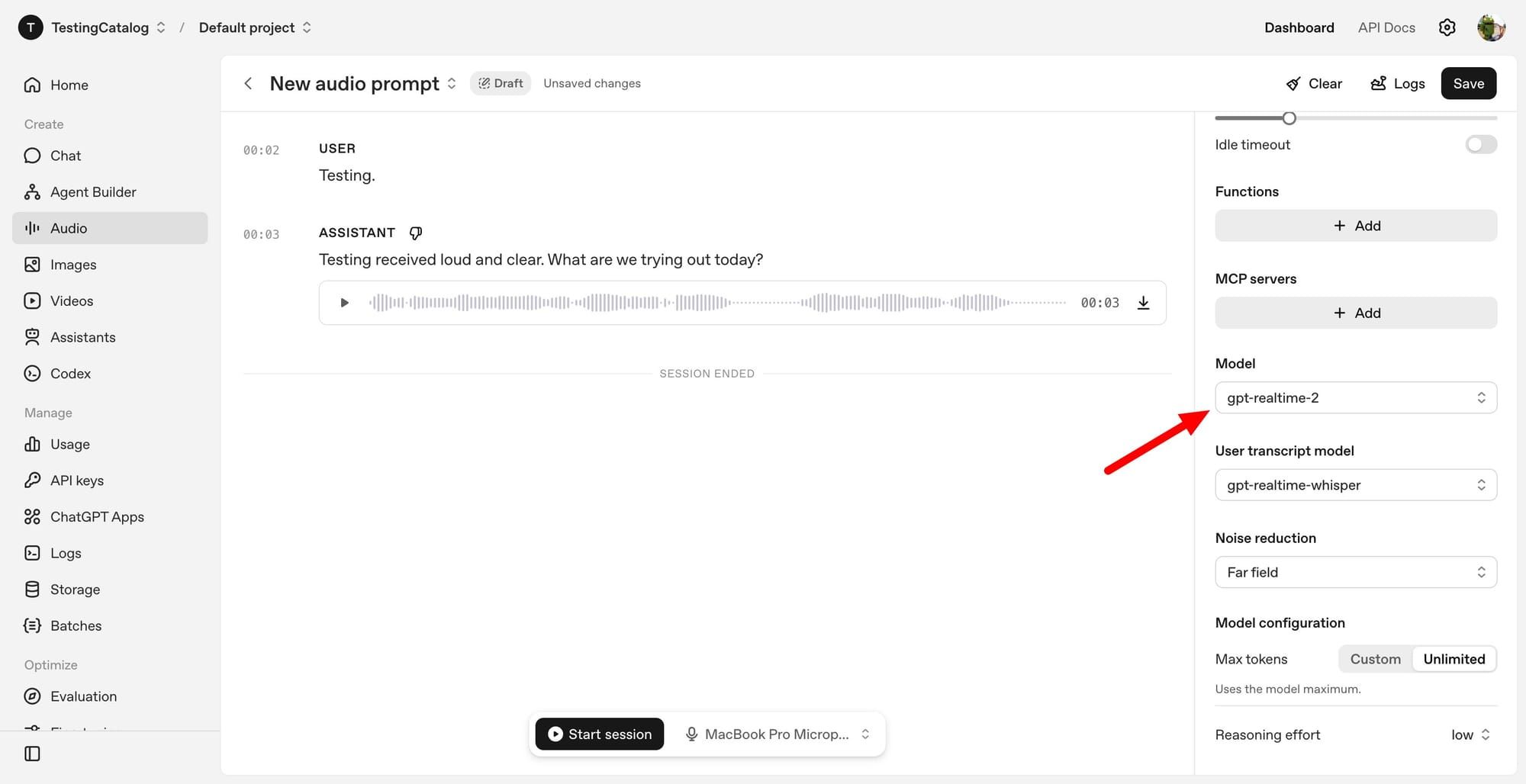

OpenAI is advancing its voice AI capabilities within its API platform by introducing three new real-time audio models designed for developers creating live voice agents, translation tools, and streaming transcription products. The release includes GPT-Realtime-2, GPT-Realtime-Translate, and GPT-Realtime-Whisper, all accessible through the Realtime API.

GPT-Realtime-2 is the primary agentic voice model in this lineup. OpenAI claims it offers GPT-5-class reasoning for spoken conversations, enabling voice agents to tackle more complex requests, manage context, utilize tools, respond to corrections, and maintain a conversation without reverting to simple call-and-response behavior. The model supports parallel tool calls, short spoken preambles like “let me check that,” improved recovery behavior when a task fails, and a larger 128K context window, an increase from the previous generation's 32K.

Introducing GPT-Realtime-2 in the API: our most intelligent voice model yet, bringing GPT-5-class reasoning to voice agents.

— OpenAI (@OpenAI) May 7, 2026

Voice agents are now real-time collaborators that can listen, reason, and solve complex problems as conversations unfold.

Now available in the API… pic.twitter.com/2DY1LU2vO8

Developers have more control over reasoning effort, with settings ranging from minimal to xhigh. Low is the default, while higher settings are intended for more intricate voice tasks where reasoning depth is prioritized over latency. OpenAI reports that GPT-Realtime-2 demonstrates improvements over GPT-Realtime-1.5 in audio intelligence, instruction adherence, context management, and live conversation control.

GPT-Realtime-Translate is designed for live multilingual voice products. It supports speech input in over 70 languages and output in 13 languages, enabling developers to create tools for customer support, cross-border sales, education, events, creator platforms, and media localization. The model is engineered to keep up with speakers while managing regional pronunciation, context shifts, and domain-specific terminology.

GPT-Realtime-Whisper offers streaming speech-to-text capabilities to the API. It transcribes audio as people speak, making it ideal for live captions, meeting notes, classroom tools, broadcasts, customer support workflows, healthcare documentation, recruiting, and sales calls where speech needs to be converted into structured text during the conversation, not afterward.

The target audience includes developers and businesses building voice-first products rather than general ChatGPT users. Early use cases identified by OpenAI include Zillow for real estate voice agents, Deutsche Telekom for multilingual support, Priceline for travel assistance, Vimeo for live video translation, and other companies focusing on customer service, enterprise search, healthcare, and AI assistant workflows.

Pricing for all three models is now available. GPT-Realtime-2 is priced at $32 per 1 million audio input tokens, $0.40 per 1 million cached input tokens, and $64 per 1 million audio output tokens. GPT-Realtime-Translate costs $0.034 per minute, while GPT-Realtime-Whisper costs $0.017 per minute. The models can be tested in OpenAI’s Playground and integrated into applications via the Realtime API.

The company behind this release, OpenAI, continues to expand its developer platform around multimodal AI, agents, and enterprise-ready APIs. This announcement focuses not on a new consumer app but on providing software teams with the infrastructure to integrate voice agents into products, support systems, travel apps, real estate tools, education platforms, and workplace software.