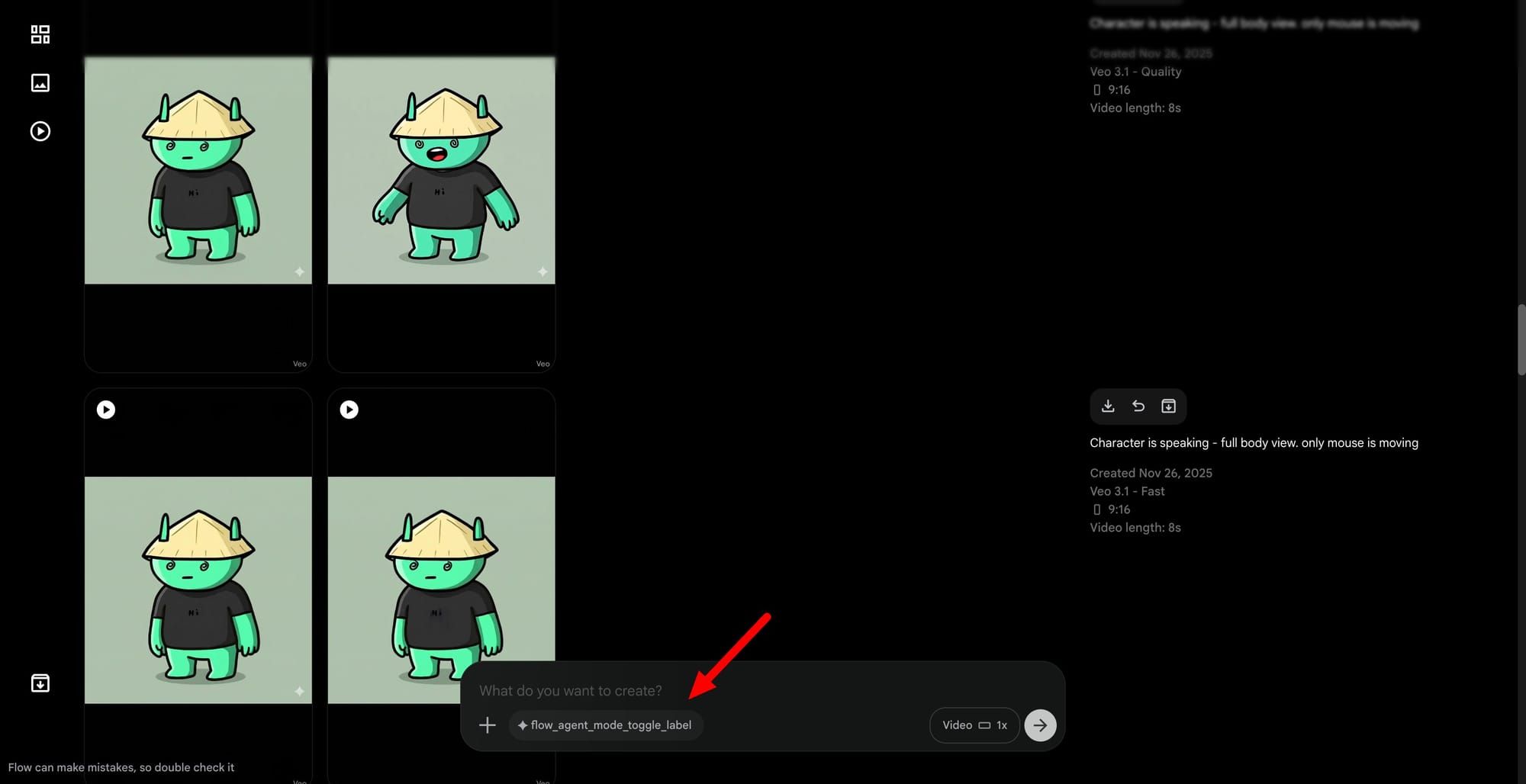

Google appears to be preparing an Agent Mode for Flow, the company's AI filmmaking tool built around the Veo model family. Build references uncovered in recent versions of the app point to a toggle that would sit directly on the prompt bar, letting creators switch the assistant on or off depending on whether they want to issue conventional generation prompts or hand off heavier orchestration to an automated helper. The functionality is not yet live, but the scaffolding suggests an ambitious rethink of how Flow projects come together.

According to traces left in the code, the assistant would be able to:

- Plan out scenes

- Discuss in-progress project changes

- Trigger generation workflows

- Manage both project-level and app-level creative tools

- Update the state of a project directly from a chat surface

In practice, this would let filmmakers describe an idea in conversation and watch the agent storyboard, queue clips, swap reference assets, and push the timeline forward without each step requiring a manual round trip through the editor.

The pattern mirrors what recently surfaced inside Google Stitch, where a similar agent layer was woven into the design tool to coordinate generation and iteration on the user's behalf. It also tracks closely with the agent mode that xAI shipped on Grok Imagine, which turned a single creative canvas into an orchestrator for multi-step image and video projects. Google's bet seems to be that across its creative surfaces (design, video, and likely more), the long-term interface will be conversational rather than tool-by-tool.

As for timing, the Google I/O developer event on May 19 and 20 looks like a natural stage for the reveal, particularly given widespread expectations of a new Veo iteration and broader updates to the Gemini-powered creative stack. If both land together, Flow would gain a stronger underlying model and a chat-driven director at the same time, bringing video generation closer to a workflow where the human acts as the creative lead while the agent handles execution.