Mistral AI has launched Mistral Medium 3.5, a 128B-parameter dense model with a 256k context window, now in public preview. It is available through the Mistral Vibe CLI, Le Chat, and the API to Pro, Team, and Enterprise users worldwide. The release also moves coding agents from local machines to the cloud: sessions run in parallel, notify users on completion, and can be started or monitored from the web or the terminal. Each session is isolated in a sandbox, supports broad code edits, and can be teleported between local and cloud environments with its state and approvals preserved.

Introducing remote agents in Vibe and Mistral Medium 3.5. You can now launch remote agents in the cloud, including from the CLI or Le Chat. Plus, new Work mode in Le Chat for complex, multi-step tasks. 🧵

— Mistral Vibe (@mistralvibe) April 29, 2026

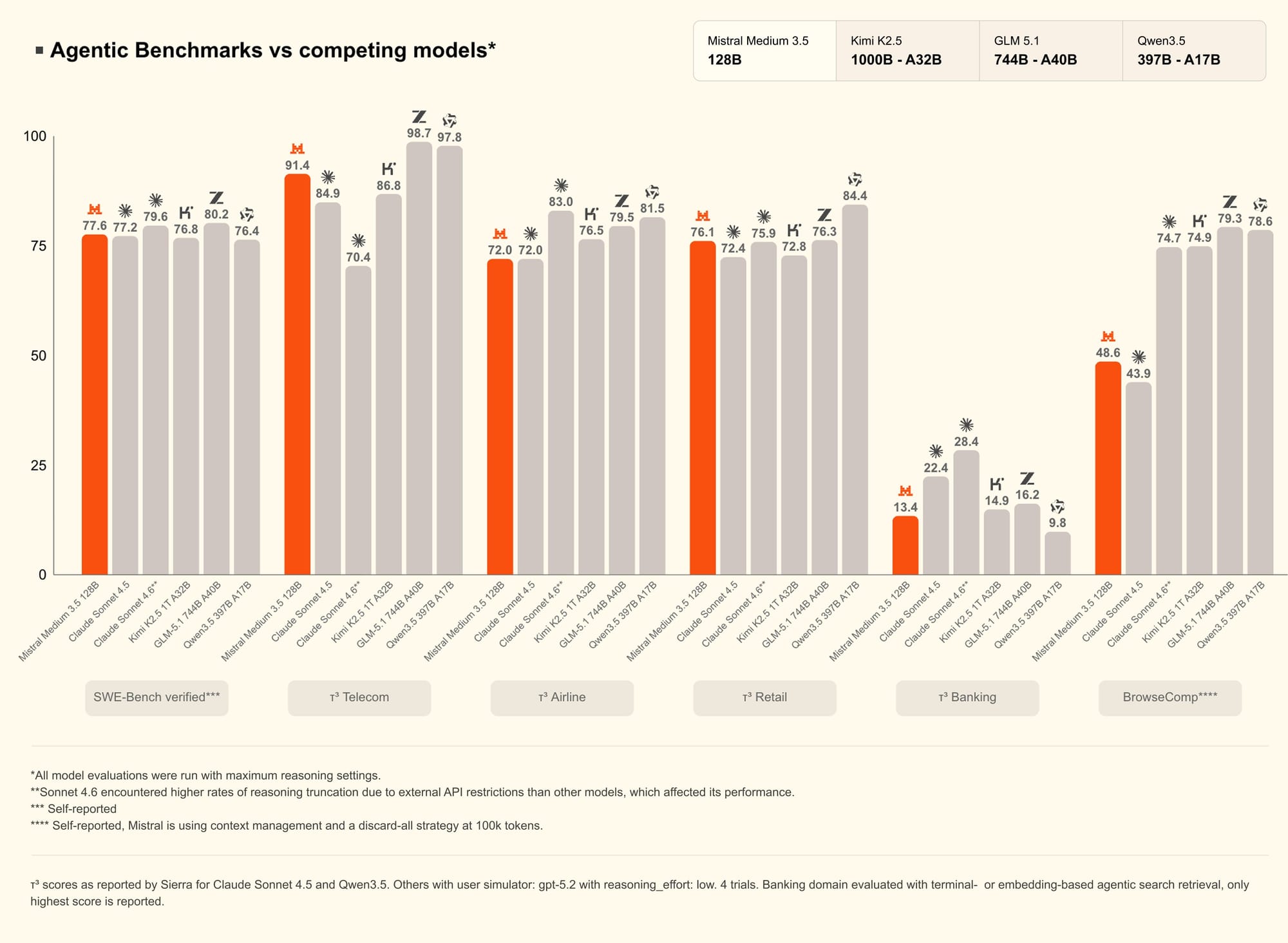

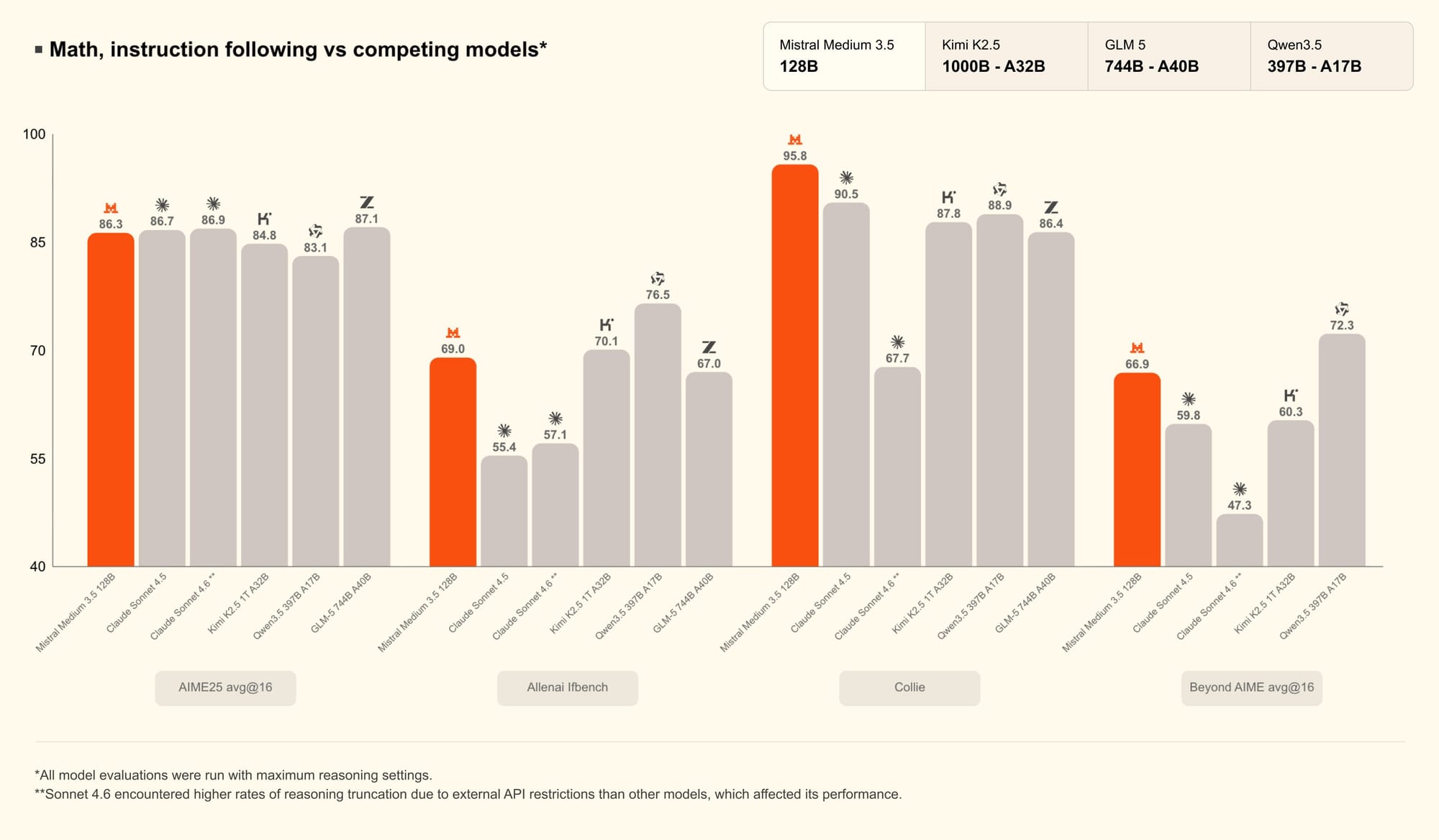

Mistral Medium 3.5 combines instruction following, reasoning, and coding in a single model, and a newly trained vision encoder lets it handle variable image sizes. It scores 77.6% on SWE-Bench Verified and outperforms models such as Devstral 2 and Qwen3.5 397B A17B on coding and agentic benchmarks.

The model's open weights are released under a modified MIT license, and it can be deployed on as few as four GPUs, which makes both self-hosted and cloud-based use practical. Work mode in Le Chat uses the model to run multi-step, cross-tool workflows: it handles complex research and productivity tasks and surfaces agent actions for user approval. Industry experts point to the seamless move to the cloud and the agent’s capacity for high-volume, well-defined coding work, and early users report better workflow automation and transparency.
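
To put the four-GPU figure in perspective, the sketch below estimates the memory needed just to hold the weights of a 128B-parameter dense model at a few common precisions. Only the parameter count and the GPU count come from the announcement. The 80 GB per-GPU capacity and the list of precisions are illustrative assumptions, and the estimate ignores the KV cache, activations, and runtime overhead, so it is a rough lower bound rather than a deployment guide.

```python
# Rough weights-only memory estimate for a dense model.
# From the article: 128B parameters, "as few as four GPUs".
# Assumptions (NOT from the article): 80 GB per GPU, and the precisions listed.

PARAMS = 128e9          # parameter count stated in the article
GPU_COUNT = 4           # GPU count stated in the article
GPU_MEMORY_GB = 80      # assumed per-GPU memory, for illustration only

# Assumed storage cost per parameter for each precision.
BYTES_PER_PARAM = {"bf16": 2.0, "fp8": 1.0, "int4": 0.5}


def weight_memory_gb(params: float, bytes_per_param: float) -> float:
    """Memory needed for the weights alone, in decimal gigabytes."""
    return params * bytes_per_param / 1e9


def fits_weights(params: float, bytes_per_param: float,
                 gpu_count: int, gpu_memory_gb: float) -> bool:
    """True if the weights alone fit in the combined GPU memory."""
    return weight_memory_gb(params, bytes_per_param) <= gpu_count * gpu_memory_gb


if __name__ == "__main__":
    total_gb = GPU_COUNT * GPU_MEMORY_GB
    for precision, nbytes in BYTES_PER_PARAM.items():
        need = weight_memory_gb(PARAMS, nbytes)
        ok = fits_weights(PARAMS, nbytes, GPU_COUNT, GPU_MEMORY_GB)
        print(f"{precision}: {need:.0f} GB of weights, "
              f"{total_gb} GB available, fits={ok}")
```

Under these assumptions, 16-bit weights take about 256 GB, which leaves limited headroom on four 80 GB devices for the KV cache of a long context. Lower-precision formats reduce the weight footprint proportionally.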

Last, but not least, don't sleep on this one: Le Chat now has Work mode (Preview) — a powerful agent for complex long-horizon tasks like research, analysis, and actions across your connected tools. Connectors are on by default so the agent pulls context from docs, email, and…

— Mistral Vibe (@mistralvibe) April 29, 2026

Mistral AI, known for its large language models and agentic developer tooling, aims to make cloud-based autonomous agents accessible to a broader audience. The release positions the company as a strong competitor in cloud-based AI coding assistance, with an emphasis on scalability, transparency, and integration with widely used productivity tools.