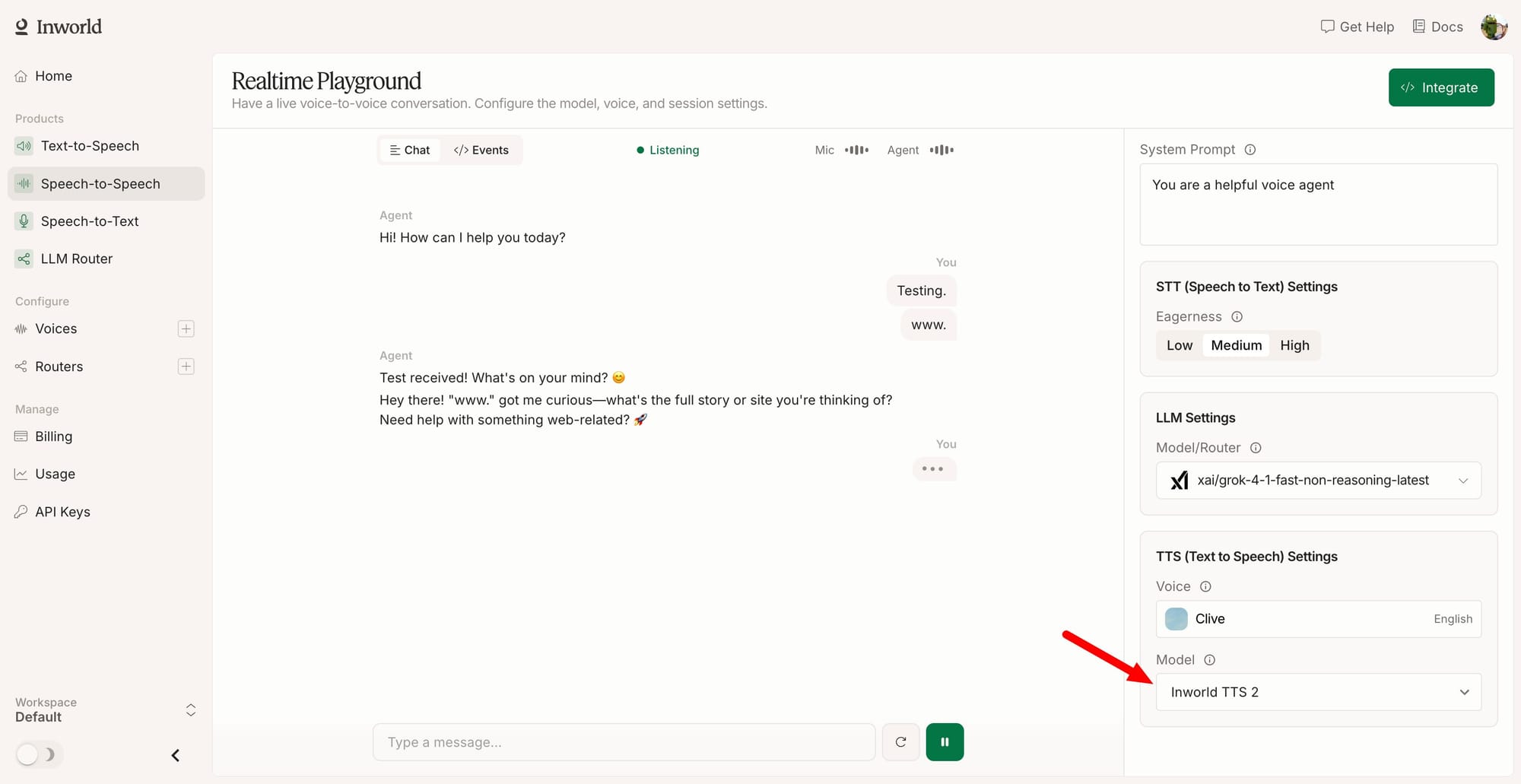

Inworld AI has released Realtime TTS-2, a voice synthesis model rebuilt from the ground up for live conversation rather than narration. Whereas previous generations of text-to-speech systems were designed for audiobooks and scripted voiceovers, TTS-2 is trained on conversational speech and reads the full audio context of a multi-turn exchange before generating a response. The model adapts to how someone sounds, not merely a text transcript of what they said.

Introducing Realtime TTS-2, a new generation of voice model built for realtime conversation.

— Inworld AI (@inworld_ai) May 5, 2026

It is the first voice model that hears the conversation, takes natural-language voice direction, holds one voice identity across over 100 languages, and speaks like a person who is… pic.twitter.com/8V75dEfjRV

Four capabilities that shape TTS-2:

- Conversational awareness: The model tracks tonal and emotional cues across an exchange and adjusts its delivery accordingly. A line delivered after a joke carries a different weight than the same line after bad news, and TTS-2 accounts for that distinction.

- Full voice direction: Developers can steer the model with natural-language descriptions instead of preset emotion tags, writing instructions like "tired but warm after a long day" the same way they would write a system prompt for a language model.

- Inline controls: These handle specific moments, such as whispers, sighs, and laughter, at precise timestamps.

- Crosslingual fluency: Covers over 100 languages with on-the-fly switching inside a single generation, preserving the speaker's voice identity across every language, so a language tutor sounds like the same person whether the session is in English, Japanese, or Spanish.

Latency sits at a sub-200ms median time-to-first-audio, which is the threshold required for consumer-scale voice agents to feel present rather than delayed.

TTS-2 is available now through the Inworld API and accessible via a live demo. Active integrations include Layercode, LiveKit, NLX, Pipecat, Vapi, and Voximplant. The model targets companion experiences, CX, support agents, interactive games, language-learning products, and AI roleplay or coaching scenarios where multi-turn emotional continuity and voice consistency across languages are operational requirements. The prior Inworld TTS generation already holds three of the top five positions on the Artificial Analysis Speech Arena, including the top ranking above Google and ElevenLabs.

Test out the demo for yourself at Inworld.ai!

Inworld AI is a research lab focused on real-time voice AI, founded by a team from DeepMind and Google with over $125 million raised from Lightspeed Venture Partners, Section32, Bitkraft, Kleiner Perkins, and Founders Fund. Its earlier models are already in production at scale, running inside Wishroll (which reached 1 million users within 19 days), Talkpal (with over 5 million learners), and Death by AI (with 20 million players). TTS-2 extends Inworld's core premise: real-time voice demands a model trained on the conversation itself, not one adapted from narration pipelines.