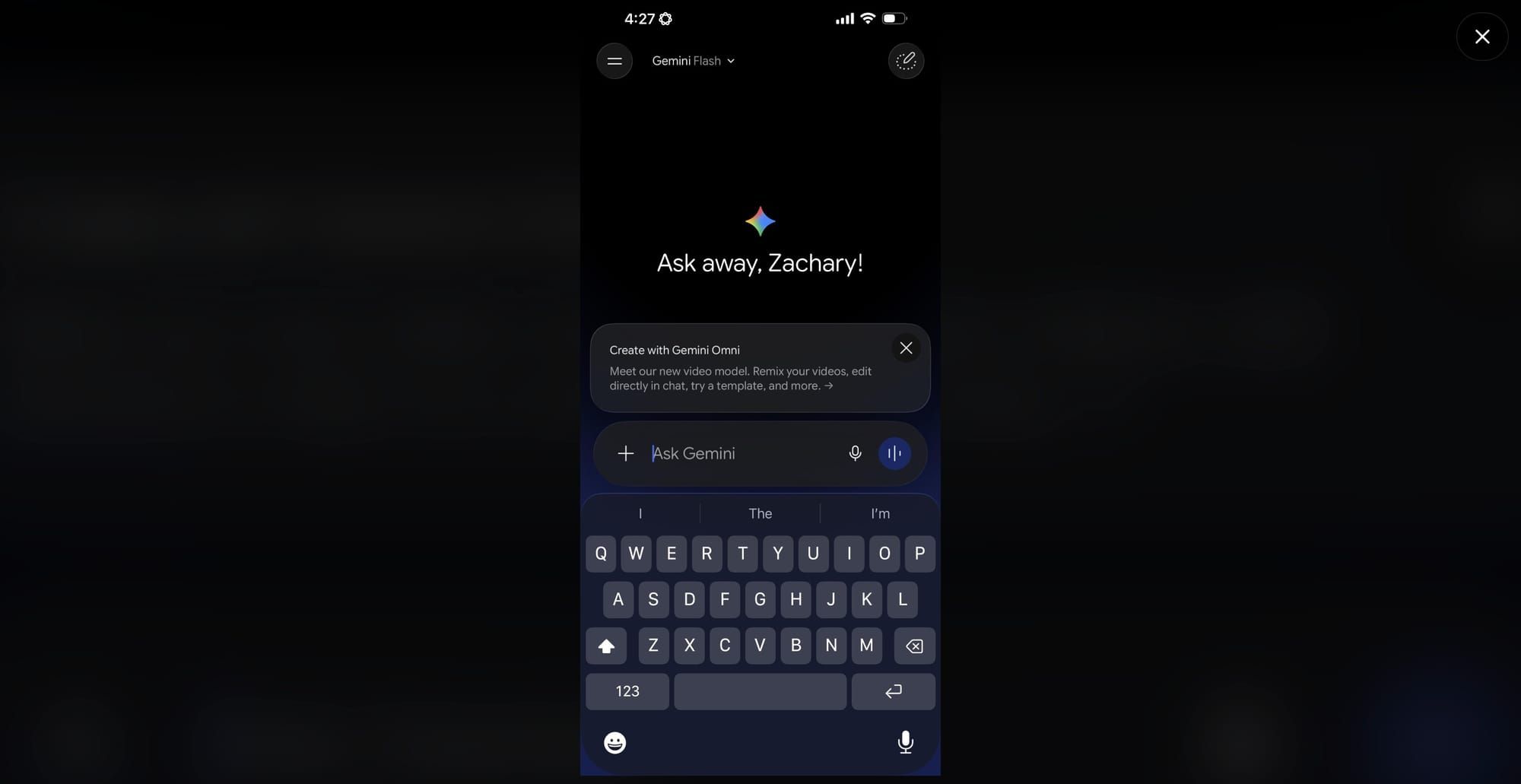

Fresh signals around Google’s upcoming Gemini Omni video model surfaced over the weekend, with Reddit users posting screenshots of a revised Gemini interface exposing the new model card. The description read “Create with Gemini Omni: meet our new video model, remix your videos, edit directly in chat, try templates, and more,” appearing to confirm the long-rumored unified approach Google has been preparing ahead of next week’s developer event. The rollout looked either accidental or part of a limited A/B test.

Sample video and early feedback 👀

> I won’t lie, this is one of the best video models I have seen, maybe not *the* best, but a really strong performance. I was particularly impressed by the prompt adherence (except for the one shot with the missing centerpiece), the model… pic.twitter.com/sG58DXlswL

🚨 AI News | TestingCatalog (@testingcatalog), May 11, 2026

Alongside the model card, users spotted a new usage limits tab inside settings, and several reported that video generation burned through credits fast, hinting at a metered system similar to what Google has been testing across Gemini surfaces. Early outputs drew mixed reactions. On raw generation fidelity, Omni appears to lag behind ByteDance’s Seedance 2, with viewers noting that the cinematic quality is a step behind the current benchmark leader. Where the model stood out was in editing: removing watermarks, swapping objects within clips, and rewriting scenes via chat instructions all worked unusually well for a first public glimpse.

GOOGLE 🔥: An upcoming Gemini Omni video model from Google is expected to be much more advanced in video editing, capable of completing tasks like removing watermarks, replacing objects in the video, and more.

It is also likely that Google will release 2 versions of this model,… https://t.co/OJUOBUXjOw pic.twitter.com/lT9sDlI8Lu

🚨 AI News | TestingCatalog (@testingcatalog), May 11, 2026

That pattern mirrors Nano Banana, which launched as a native image model in Gemini with middling generation scores but topped editing leaderboards, and was later upgraded into a frontier image system. Google appears to be running the same playbook for video, prioritizing modality unification under Gemini over raw quality leadership at launch. There are also hints that Omni will ship in tiered variants, likely Flash and Pro, with the outputs now circulating most likely coming from the Flash tier.

Google keeps preparing its upcoming Gemini Omni models for the release.

> Gemini Omni model will be available on APIs as well

> The model will be considered as Agent, similarly to Deep Research on AI Studio

Soon? 👀

P. S. Just a reminder that Nano Banana 1 wasn’t better than… pic.twitter.com/QnkbQ9WRQm

🚨 AI News | TestingCatalog (@testingcatalog), May 11, 2026

The timing fits neatly with Google I/O on May 19 and 20, where the company has a track record of unveiling its most ambitious AI shifts. A short pre-event window paired with a controlled leak gives Google room to gather reactions and shape the narrative before the keynote.