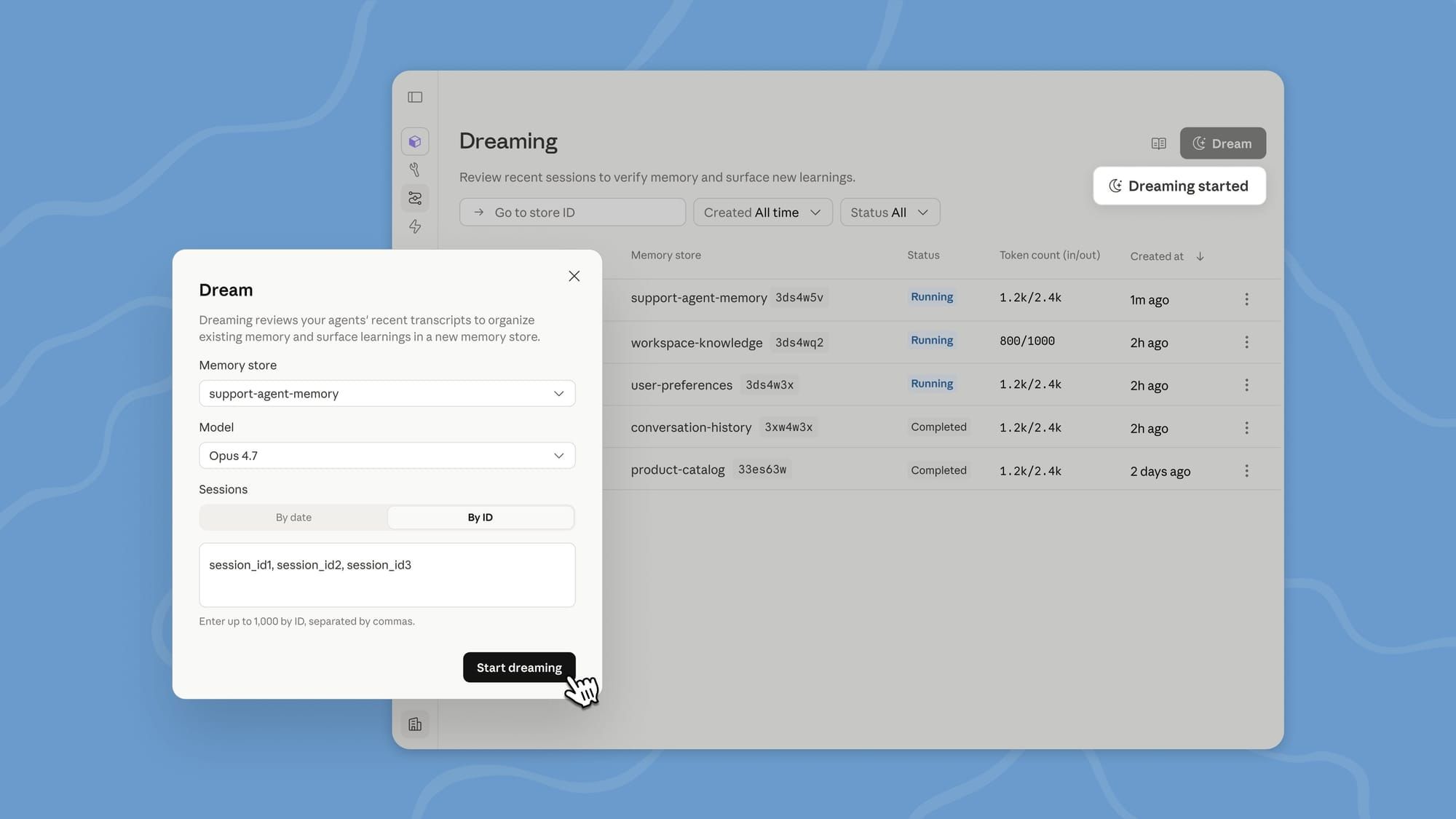

Anthropic has unveiled a research preview of "dreaming" in Claude Managed Agents, a feature that helps AI agents improve over time by analyzing past sessions and curating their memory. Dreaming reviews an agent's memory stores on a schedule to extract patterns, address recurring errors, and restructure stored information so that it stays accurate. Developers can configure it for either automatic updates or manual review, which gives them flexibility in managing agent learning cycles.

> In Claude Managed Agents, we’ve added multiagent orchestration, an outcomes loop for rubric-driven self-improvement, dreaming for self-learning, & webhooks. pic.twitter.com/Ip4pgLaEsp
>
> ClaudeDevs (@ClaudeDevs), May 6, 2026

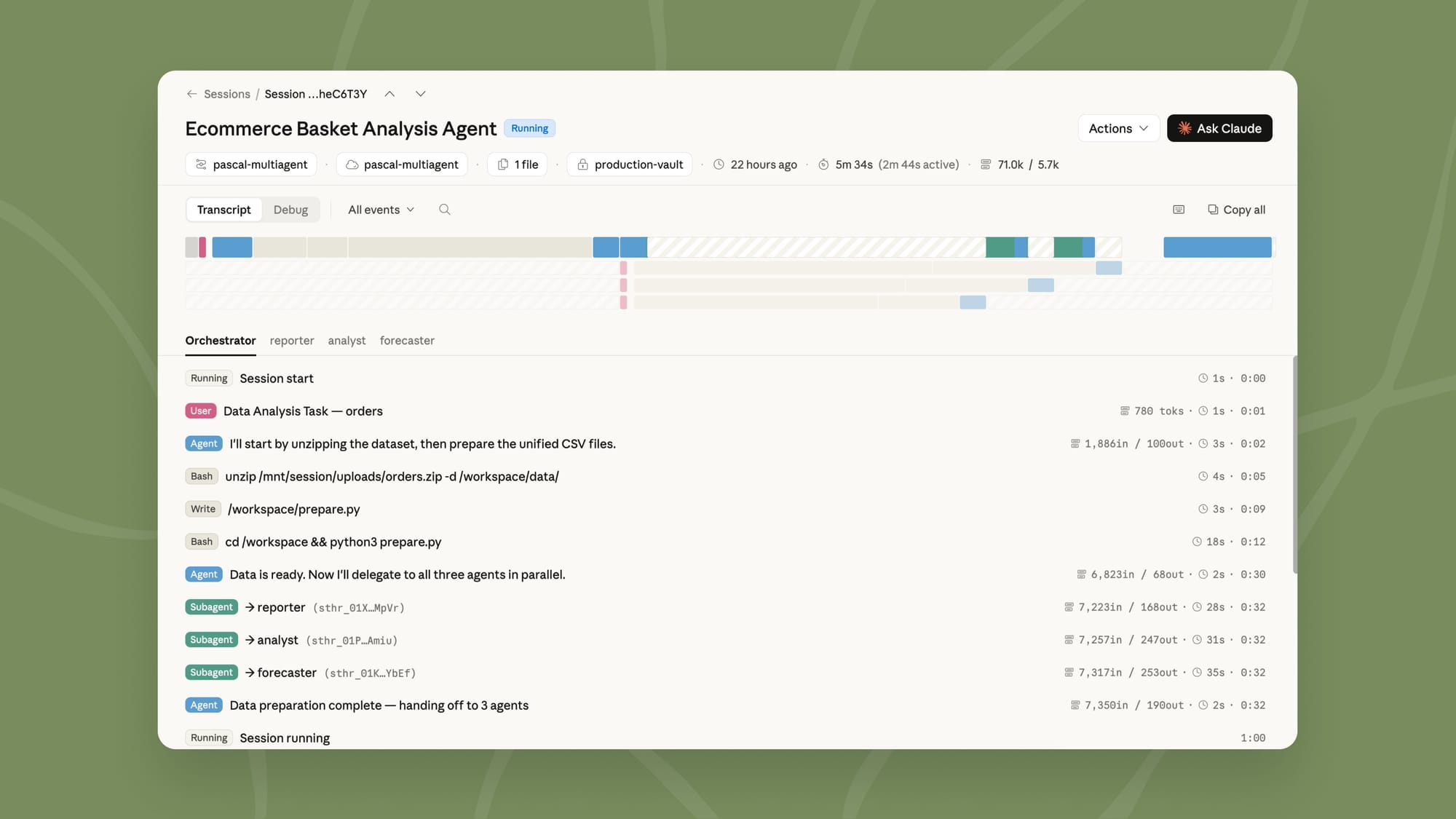

The release also includes outcomes, a system allowing developers to define explicit success criteria for agent tasks, and multiagent orchestration, which enables complex task delegation among specialized agents.

These capabilities are available via the Claude Platform to developers who request access, with use cases spanning:

- Legal drafting

- Large-scale log analysis

- Document quality checks

- Writing automation

Anthropic, the company behind Claude Managed Agents, is focused on advancing reliable and controllable AI systems. With these updates, it aims to serve enterprise developers who need AI agents that can handle intricate workflows autonomously. The new features distinguish Claude Managed Agents from previous iterations and from many competing AI solutions by offering granular memory control and structured task evaluation.

Early adopters, including legal, tech, and content teams, have reported substantial improvements in task success rates and workflow efficiency, in line with the product’s focus on robust, self-improving automation.