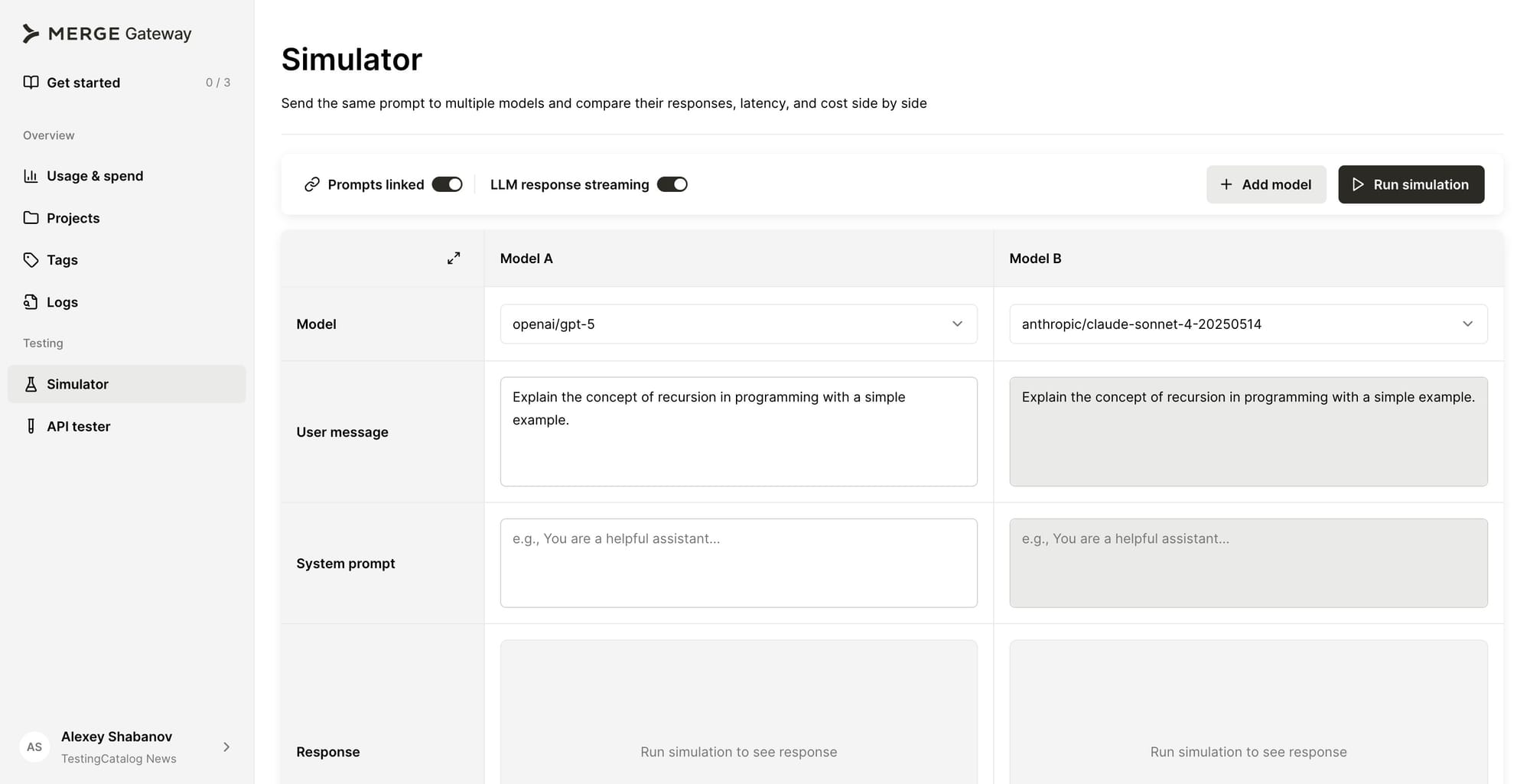

Merge is launching Gateway on March 31, a control plane aimed at engineering teams running language models in production. The product sits between an application and every LLM provider a team uses, exposing a single API endpoint that handles routing, fallback logic, spend governance, PII detection, and observability across all of them.

The routing layer covers OpenAI, Anthropic, Google, Cohere, Mistral, Grok, and AWS Bedrock, with provider switching handled through configuration rather than code changes. Budget controls operate at the account, team, or per-customer level and trigger alerts before spend becomes a problem, replacing the pattern in which teams discover runaway costs after the invoice arrives.
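To make the idea concrete, here is a minimal sketch of configuration-driven routing with fallback and a budget alert threshold. It is not Gateway's actual configuration format or SDK: the `CONFIG` layout, the function names, the 80% alert ratio, and the stub providers are all assumptions made for illustration.

```python
# Hypothetical configuration: provider order and budget live in data, not code.
CONFIG = {
    "route": ["openai", "anthropic", "mistral"],       # tried in this order
    "budget": {"limit_usd": 500.0, "alert_at": 0.8},   # alert at 80% of the limit
}


class ProviderError(Exception):
    """Raised by a provider callable when a request fails."""


def complete_with_fallback(prompt, providers, route):
    """Try each provider in `route` order; return (name, reply) from the first success."""
    errors = {}
    for name in route:
        try:
            return name, providers[name](prompt)
        except ProviderError as exc:
            errors[name] = str(exc)  # remember the failure and move to the next provider
    raise ProviderError(f"all providers failed: {errors}")


def budget_status(spend_usd, limit_usd, alert_at):
    """Classify spend as 'ok', 'alert' (past the alert ratio), or 'exceeded'."""
    if spend_usd >= limit_usd:
        return "exceeded"
    if spend_usd >= alert_at * limit_usd:
        return "alert"
    return "ok"


# Stub providers standing in for real API calls (illustrative only).
def _unavailable(prompt):
    raise ProviderError("503 service unavailable")


def _echo(prompt):
    return f"echo: {prompt}"


PROVIDERS = {"openai": _unavailable, "anthropic": _echo, "mistral": _echo}

if __name__ == "__main__":
    print(complete_with_fallback("hello", PROVIDERS, CONFIG["route"]))
    print(budget_status(450.0, **CONFIG["budget"]))
```

In this sketch, switching or reordering providers means editing the `route` list only; the calling code stays the same, which is the property the article attributes to Gateway's routing layer.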

API key management is centralised, removing the risk of credentials scattered across services with no audit trail of which models are receiving which requests. Observability runs across all providers in a single dashboard: latency, error rates, cost per call, and model performance are visible without switching between vendor portals.
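As a rough illustration of what cross-provider observability involves, the sketch below rolls per-call records up into the metrics named above. The record fields (`provider`, `latency_ms`, `cost_usd`, `ok`) and the `summarize` function are hypothetical and are not taken from Gateway's dashboard or API.

```python
from collections import defaultdict
from statistics import mean


def summarize(calls):
    """Group call records by provider and compute latency, error rate, and cost per call."""
    by_provider = defaultdict(list)
    for call in calls:
        by_provider[call["provider"]].append(call)

    summary = {}
    for provider, rows in by_provider.items():
        summary[provider] = {
            "calls": len(rows),
            "mean_latency_ms": mean(r["latency_ms"] for r in rows),
            "error_rate": sum(not r["ok"] for r in rows) / len(rows),
            "cost_per_call_usd": sum(r["cost_usd"] for r in rows) / len(rows),
        }
    return summary


# Illustrative records; a real system would collect these at the proxy layer.
SAMPLE_CALLS = [
    {"provider": "openai", "latency_ms": 100, "cost_usd": 0.5, "ok": True},
    {"provider": "openai", "latency_ms": 300, "cost_usd": 0.5, "ok": False},
    {"provider": "anthropic", "latency_ms": 250, "cost_usd": 0.25, "ok": True},
]

if __name__ == "__main__":
    for provider, stats in summarize(SAMPLE_CALLS).items():
        print(provider, stats)
```

Because every request passes through one proxy in this model, the same record shape can be captured for every provider, which is what makes a single dashboard possible.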

The audience Gateway targets is specific: engineering teams past the prototype stage that are shipping AI in production, or actively preparing to do so. Most of these teams have already built some version of an internal LLM routing service. What starts as a few lines of glue code for handling one provider typically grows into a maintenance project that pulls engineering attention away from the product itself. Merge argues that this internal tooling is not differentiated work, and that replacing it with a production-grade control plane frees teams to focus on what they are building.

We just built the #1 tool every AI team wants.

Introducing @merge_api Gateway: LLM routing, fallback, cost guardrails, and security, all in one place.

Everyone gets $10 free LLM usage on us to try it out.

RT+ comment “Gateway” → we’ll double your credits. pic.twitter.com/2fxGfPjFJe

— Shensi Ding (@shensi) March 31, 2026

Gateway ships with Python and TypeScript SDKs and is compatible with the existing OpenAI, Anthropic, and LangChain SDK clients. Teams already using any of those can point their client's base URL at the Gateway endpoint and pick up multi-provider routing and observability without changing their application code.
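The base URL swap described above might look roughly like the following. The endpoint URL and the environment variable names are placeholders, since the article does not give the real ones; the only library detail relied on is that the official `openai` Python client accepts `base_url` and `api_key` keyword arguments.

```python
import os

# Placeholder endpoint: the real Gateway URL is not stated in this article.
GATEWAY_BASE_URL = "https://gateway.example.com/v1"


def client_options(use_gateway: bool) -> dict:
    """Build keyword arguments for an OpenAI-style client constructor."""
    if use_gateway:
        return {
            "base_url": GATEWAY_BASE_URL,
            # Hypothetical variable name for a gateway-issued key.
            "api_key": os.environ.get("GATEWAY_API_KEY", "placeholder-key"),
        }
    # Default: talk to the provider directly with its own key.
    return {"api_key": os.environ.get("OPENAI_API_KEY", "placeholder-key")}


# With the `openai` package installed, the options plug in directly:
#   from openai import OpenAI
#   client = OpenAI(**client_options(use_gateway=True))
# The rest of the application code would stay unchanged.

if __name__ == "__main__":
    print(client_options(use_gateway=True)["base_url"])
```

Keeping the switch in one small function means the choice between direct and proxied access stays a configuration decision rather than something spread through the codebase.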


Merge was founded in 2020 and is based in San Francisco. The company raised $75 million in a Series B round and built its core product around a Unified API for HRIS, CRM, and ATS, enabling B2B software teams to connect to dozens of third-party systems through a single integration rather than building each connector individually. Gateway is a separate product line from Merge Unified and Agent Handler, and moves the company into LLM infrastructure, a category that currently includes tools like LiteLLM, Portkey, and Cloudflare AI Gateway. Where much of that space is built around open-source proxies or managed endpoints, Merge is positioning Gateway around the governance and compliance demands of teams running AI in production at scale.