MemOS is now available as an open-source “memory operating system” for LLM-based agents, focused on long-term recall and stable persona across sessions. The project targets builders of AI companions, role-playing NPCs, and multi-agent systems that need agents to retain context over time without reloading full conversation history for every run.


MemOS becomes essential once agents need to operate across sessions, users, or tasks while maintaining consistent behavior. As teams move from single-turn chatbots to agents that plan, call tools, and coordinate work, “memory” stops being a convenience feature and turns into a control surface that needs governance, auditing, and correction.

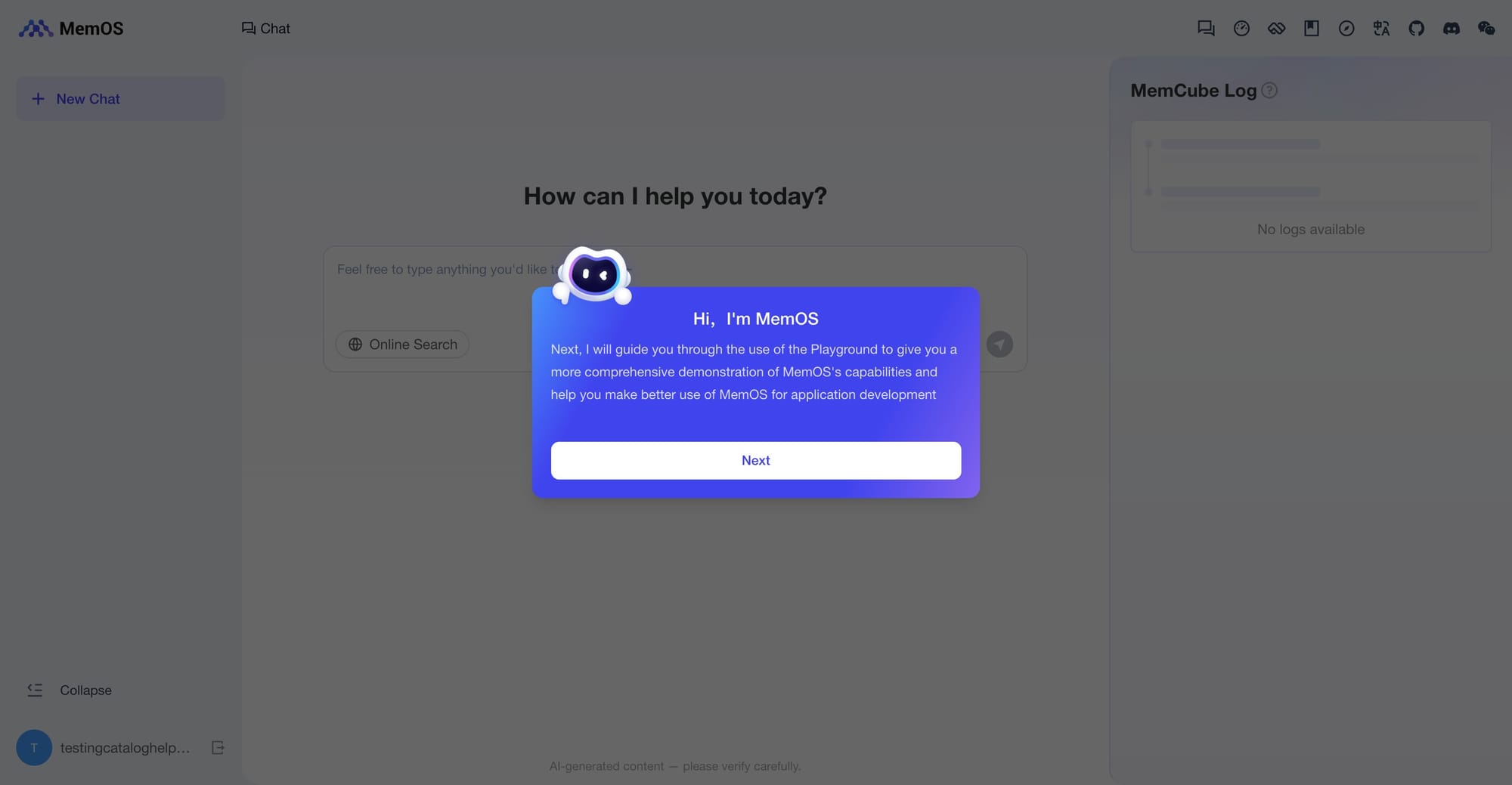

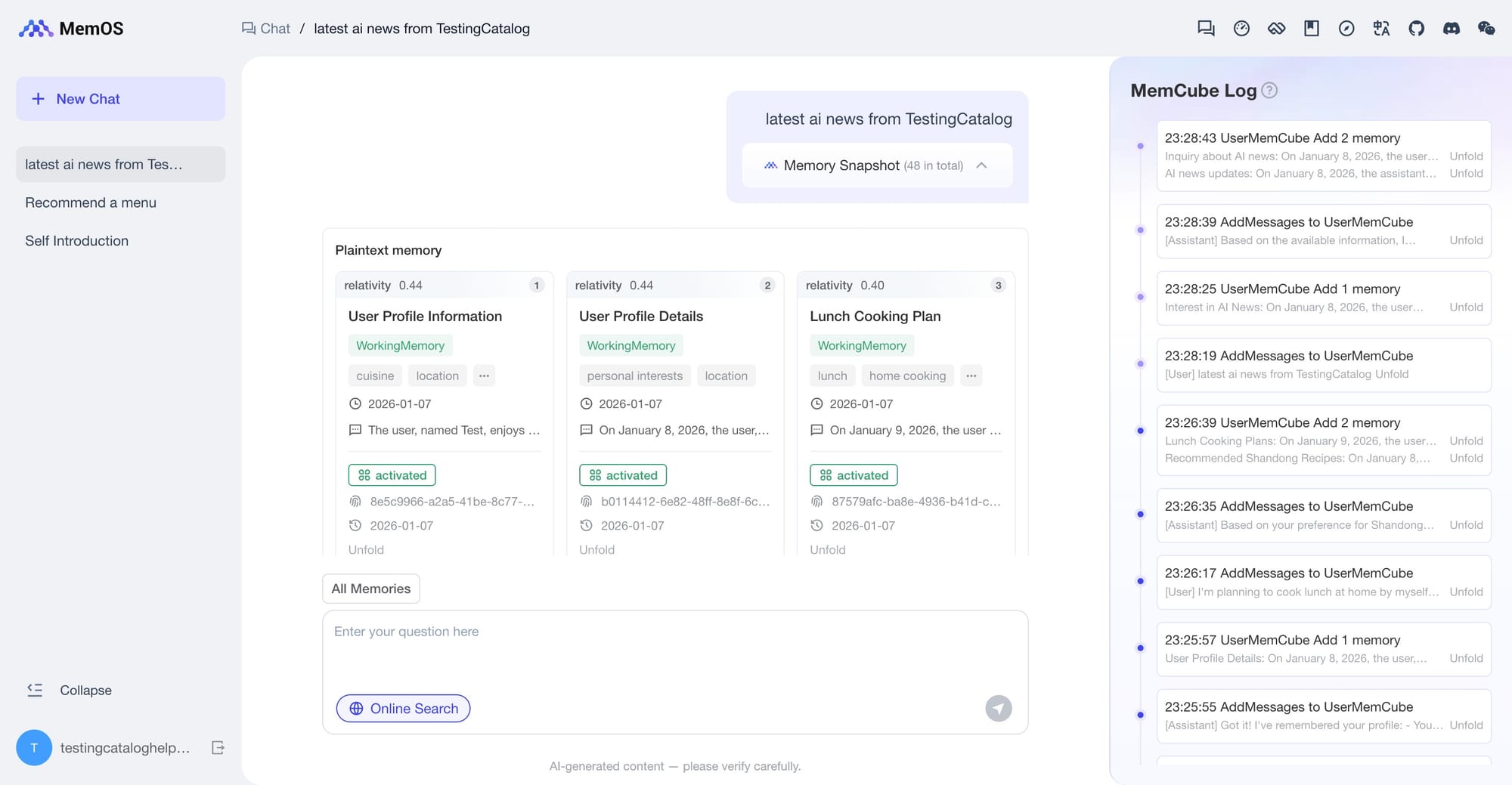

MemOS Playground

From a technical evolution standpoint, long-term memory started as something “baked into the model,” where behavior and recall were largely embedded in parameters. The next phase introduced external “notebooks,” where retrieval modules could fetch relevant snippets and append them to context. The agent era shifts the requirement again: agents need a responsible memory manager that treats memory as system state, with clear rules for what gets stored, how it is retrieved, how it is updated, and how it is deleted when it becomes wrong.

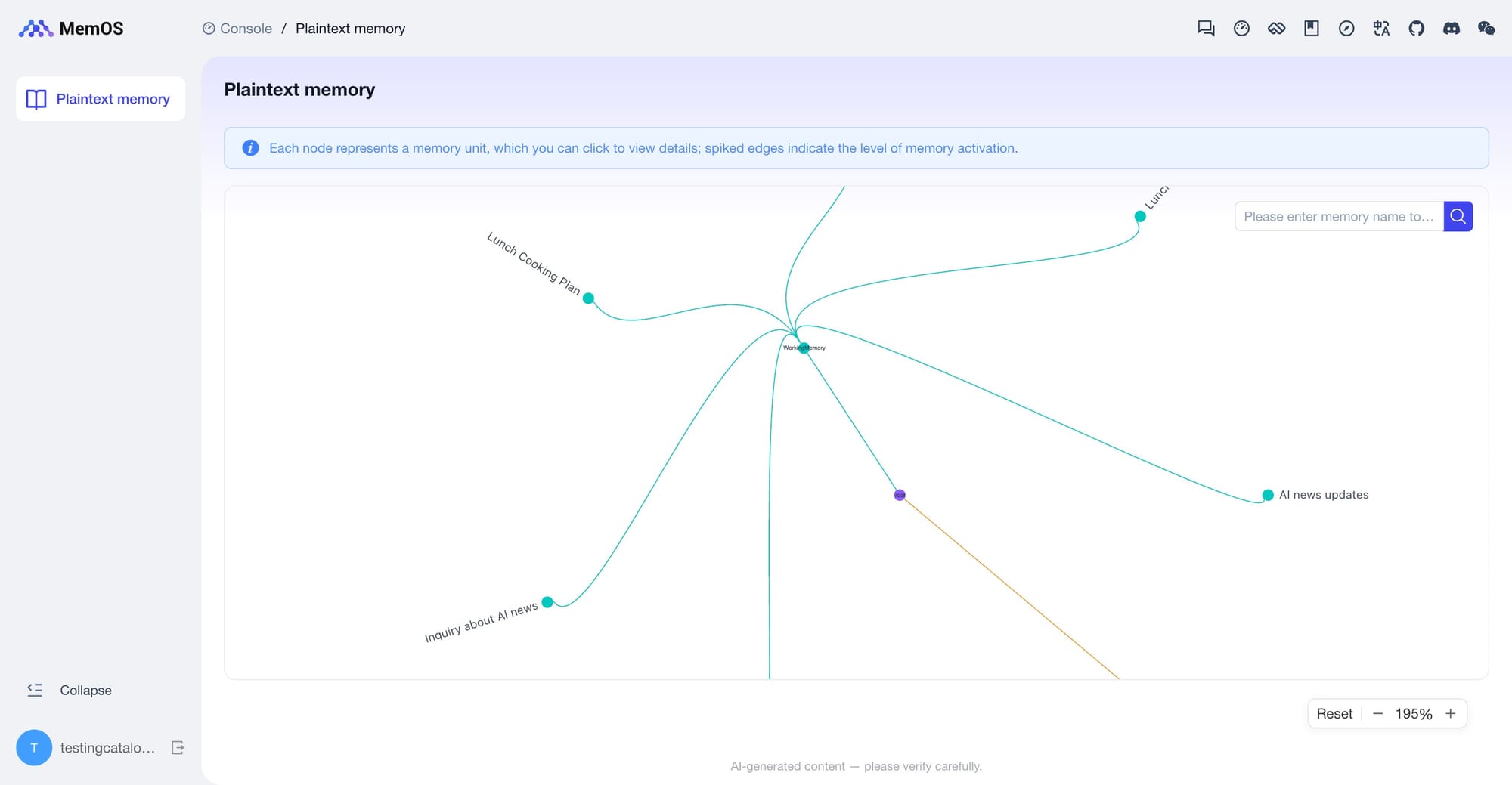

MemOS positions itself as that memory manager. Instead of treating memory as a passive store, it exposes a unified API that lets an agent write, search, merge, and revise memories while keeping personality and identity stable across runs. Where retrieval-first systems treat memory as an index, MemOS treats it as managed system state.
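To make the four operations concrete, here is a minimal in-process sketch of a store that supports write, search, merge, and revise. It is not the MemOS API: `ToyMemoryStore`, `MemoryRecord`, and every method name are hypothetical, and naive keyword matching stands in for real retrieval.

```python
import re
from dataclasses import dataclass, field
from itertools import count


def _tokens(text: str) -> set[str]:
    """Lowercased word tokens, used for naive keyword matching."""
    return set(re.findall(r"\w+", text.lower()))


@dataclass
class MemoryRecord:
    id: int
    text: str
    version: int = 1
    history: list[str] = field(default_factory=list)  # earlier texts, kept for audit


class ToyMemoryStore:
    """Hypothetical illustration only; names and behavior are assumptions."""

    def __init__(self) -> None:
        self._records: dict[int, MemoryRecord] = {}
        self._ids = count(1)

    def write(self, text: str) -> int:
        record = MemoryRecord(id=next(self._ids), text=text)
        self._records[record.id] = record
        return record.id

    def search(self, query: str) -> list[MemoryRecord]:
        terms = _tokens(query)
        return [r for r in self._records.values() if terms & _tokens(r.text)]

    def revise(self, record_id: int, new_text: str) -> None:
        # Keep the old text so a correction can be audited later.
        record = self._records[record_id]
        record.history.append(record.text)
        record.text = new_text
        record.version += 1

    def merge(self, keep_id: int, drop_id: int) -> None:
        if keep_id == drop_id:
            raise ValueError("cannot merge a record into itself")
        dropped = self._records.pop(drop_id)
        keep = self._records[keep_id]
        self.revise(keep_id, f"{keep.text}; {dropped.text}")


store = ToyMemoryStore()
a = store.write("user prefers concise answers")
b = store.write("user works in Operations")
store.revise(b, "user works in Technology")  # correct a wrong fact
store.merge(a, b)                            # fold both facts into one record
hits = store.search("technology")
```

The point of the sketch is that revision and merging are first-class operations with a version trail, which is the difference between managed state and an append-only index.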

A major step in the latest MemOS release is visual understanding tied to unified memory management. MemOS can extract and process images and documents and manage them alongside standard text memory. Practically, this means an AI application can remember not only what a user said, but also the image they shared and the PDF they uploaded, then recall that information later when it becomes relevant to the task. This matters in real workflows where context is rarely pure text, like support tickets with screenshots, onboarding documents, or product specs stored as PDFs.

The Knowledge Base feature is the other pillar, because it bridges two kinds of knowledge that usually live in separate systems: static documents and dynamic, user-specific memory. In many products, “docs” live in a retrieval store while “memory” lives in a profile store, and developers end up writing glue code to reconcile them. MemOS brings both into a single memory layer so the agent can reason across them without special-case logic.

A concrete scenario is an internal assistant that needs to answer policy questions correctly and contextually. The Knowledge Base contains the employee handbook and policy documents. The memory layer contains user facts, such as Employee A works in Operations and Employee B works in Technology. When Employee A asks, “How many days of annual leave do I have?”, the agent first recalls the identity detail from memory, then retrieves the Operations-specific leave policy from the Knowledge Base, and finally composes an answer from both sources. This pattern generalizes to any situation where the same question yields different answers depending on role, region, entitlement, or project context.
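The three steps in this scenario (recall identity, retrieve the role-specific policy, compose the answer) can be sketched as follows. Everything here is an assumption for illustration: the dictionaries stand in for the memory layer and the Knowledge Base, the function name is hypothetical, and the leave-day figures are invented placeholders rather than anything from the source.

```python
# Stand-in for user-specific memory (departments taken from the scenario above).
USER_MEMORY = {
    "employee_a": {"department": "Operations"},
    "employee_b": {"department": "Technology"},
}

# Stand-in for the Knowledge Base. The day counts are invented placeholders.
KNOWLEDGE_BASE = {
    ("annual_leave", "Operations"): "Operations staff receive 15 days of annual leave.",
    ("annual_leave", "Technology"): "Technology staff receive 20 days of annual leave.",
}


def answer_leave_question(user_id: str) -> str:
    # Step 1: recall the identity detail from memory.
    profile = USER_MEMORY.get(user_id)
    if profile is None:
        return "I don't know which department you work in yet."
    department = profile["department"]

    # Step 2: retrieve the department-specific policy from the Knowledge Base.
    policy = KNOWLEDGE_BASE.get(("annual_leave", department))
    if policy is None:
        return f"No annual leave policy found for {department}."

    # Step 3: compose an answer from both sources.
    return f"You work in {department}. {policy}"


answer_a = answer_leave_question("employee_a")
answer_b = answer_leave_question("employee_b")
```

The same question produces different answers for the two employees because the memory lookup selects which Knowledge Base entry applies.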

To keep this system accurate over time, MemOS also supports a feedback loop that lets teams correct what the agent “knows” without rebuilding pipelines. Feedback and modifications can be given in natural language. In practice, when a user flags a policy as outdated, the system can mark the relevant Knowledge Base entry for review or revision, reducing drift between what the agent says and what is currently true.
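One way to picture the flag-for-review step is a status field on each Knowledge Base entry, plus a record of the feedback that triggered the change. This is a sketch under assumptions, not how MemOS implements it: `KBEntry`, `flag_outdated`, and `active_entries` are hypothetical names, and the feedback text is stored as-is rather than interpreted.

```python
from dataclasses import dataclass, field


@dataclass
class KBEntry:
    entry_id: str
    text: str
    status: str = "active"  # "active" or "needs_review"
    feedback: list[str] = field(default_factory=list)


def flag_outdated(kb: dict[str, KBEntry], entry_id: str, comment: str) -> KBEntry:
    """Attach the user's comment and take the entry out of active use."""
    entry = kb[entry_id]
    entry.feedback.append(comment)
    entry.status = "needs_review"
    return entry


def active_entries(kb: dict[str, KBEntry]) -> list[KBEntry]:
    """Entries the agent may still answer from."""
    return [e for e in kb.values() if e.status == "active"]


kb = {
    "leave-ops": KBEntry("leave-ops", "Operations annual leave policy"),
    "leave-tech": KBEntry("leave-tech", "Technology annual leave policy"),
}
flag_outdated(kb, "leave-ops", "This policy changed last quarter.")
usable = active_entries(kb)
```

Keeping the original comment alongside the entry gives reviewers the context they need to decide whether to revise or restore it.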

MemOS also extends memory into tool usage, which is where many agents either waste time or repeat mistakes. Agents frequently call external tools, like search, databases, or internal APIs, and the outcome depends on details such as which tool was chosen, the parameters used, and what came back. MemOS can store the complete history of tool use as memory, including the decision to call a tool, its inputs, and its results, so the agent can reuse prior “experience” instead of relearning it every session. Over time, this enables patterns like “use a tool once, reuse the experience,” where the agent avoids redundant calls and converges on the right tool choices for a given user and task.
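The "use a tool once, reuse the experience" pattern can be approximated with a log keyed by tool name and parameters. The sketch below is an illustration under assumptions, not the MemOS implementation: `ToolMemory` and `fake_search` are hypothetical, parameters must be JSON-serializable, and reusing a stored result is only appropriate when the tool's output is stable for the same inputs.

```python
import json
from typing import Any, Callable


class ToolMemory:
    """Hypothetical record of tool calls: which tool, which inputs, what came back."""

    def __init__(self) -> None:
        self._log: dict[str, Any] = {}
        self.real_calls = 0

    def call(self, name: str, tool: Callable[..., Any], **params: Any) -> Any:
        # Sorting keys makes the lookup independent of argument order.
        key = json.dumps([name, params], sort_keys=True)
        if key in self._log:
            return self._log[key]  # reuse prior experience
        self.real_calls += 1
        result = tool(**params)
        self._log[key] = result
        return result


def fake_search(query: str, limit: int = 3) -> str:
    """Stand-in for an external tool such as a search API."""
    return f"top {limit} results for {query!r}"


memory = ToolMemory()
first = memory.call("search", fake_search, query="leave policy", limit=5)
second = memory.call("search", fake_search, limit=5, query="leave policy")
third = memory.call("search", fake_search, query="expense policy", limit=5)
```

Here the second call is answered from the log, so only two real tool invocations happen across three requests. A production system would also need expiry or invalidation for results that go stale.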

Behind the product, MemOS is developed as an open-source project by the MemTensor team under the OpenMem ecosystem, with a modular architecture intended to support multiple memory types and integrations. The focus is on giving builders a system-level memory layer that can fit companions, NPCs, and multi-agent systems without locking them into a single model or vendor-specific storage approach.

If you are evaluating memory layers for agents in production, the MemTensor/MemOS GitHub repo is the best place to start: scan the Stardust release notes, review the docs and API surface, and compare the design choices to your current retrieval and state approach. If it matches your stack, starring the repository is a quick signal that helps the maintainers prioritize fixes, expand integrations, and keep momentum with the community.