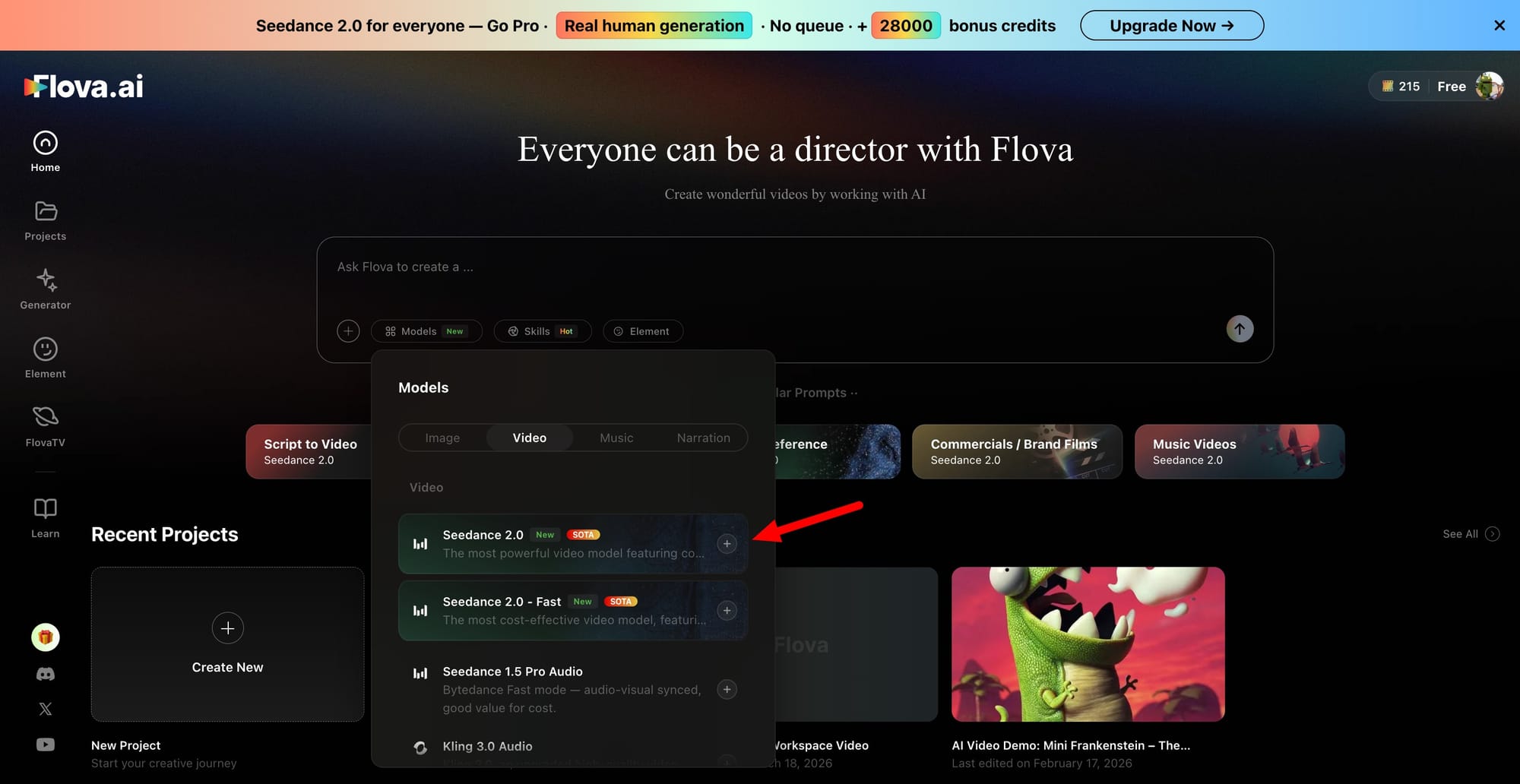

Flova has integrated Seedance 2.0, ByteDance's latest AI video generation model, and it is available now inside the platform for creators building cinematic short dramas and long-shot video content. The integration ships with a Quick Access feature that lets users launch Seedance 2.0 or NanoBanana in a single click from the interface, with no additional setup required. That sets it apart from platforms where model access still involves configuration steps.

Seedance 2.0 runs on a unified multimodal architecture that accepts text, images, video references, and audio in a single generation flow. It produces 1080p output with natural motion, native audio, and multi-shot cuts without post-production layering. Character consistency holds across scene transitions: faces, clothing, and visual style stay stable from shot to shot, which is what makes the model practical for short drama formats where continuity matters across cuts.

On the performance side, Flova's PRO subscribers get access to up to 50 concurrent generations for Seedance 2.0, while other models on the platform allow 10 concurrent runs. That throughput gap is meaningful for creators who work at volume or need to iterate quickly across multiple scenes. Generation is described as stable, fast, and cost-effective at scale. The full pipeline, from storyboarding through to cinematic-level video output, runs inside a single workflow with no external tools or additional setup required.

Test out this model on FlovaAI!

Flova is positioned as an all-in-one AI video agent, combining text-to-video, image-to-video, storyboarding, timeline editing, voiceover, and music generation in a single interface alongside models including Sora 2, Veo 3.1, Kling AI, Hailuo 2.3, Midjourney, and now Seedance 2.0. The platform is currently in beta, with subscription pricing running at a significant discount relative to comparable providers in the market.