Perplexity has launched pplx-embed-v1 and pplx-embed-context-v1, two high-performance text embedding models designed specifically for large-scale retrieval tasks. The release is now public, targeting organizations and developers who require advanced semantic search and retrieval capabilities across massive datasets. The models are available at both 0.6B and 4B parameter sizes, with the smaller variants optimized for speed and efficiency, and the larger ones focused on maximizing retrieval quality. Users can access these models on Hugging Face under an MIT License and through Perplexity’s API, supporting deployment across multiple platforms including Transformers, SentenceTransformers, and ONNX.

Today we're releasing two embedding model families, pplx-embed-v1 and pplx-embed-context-v1.

— Perplexity (@perplexity_ai) February 26, 2026

These SOTA embedding APIs are designed specifically for real-world, web-scale retrieval.https://t.co/fUUasIGhYX

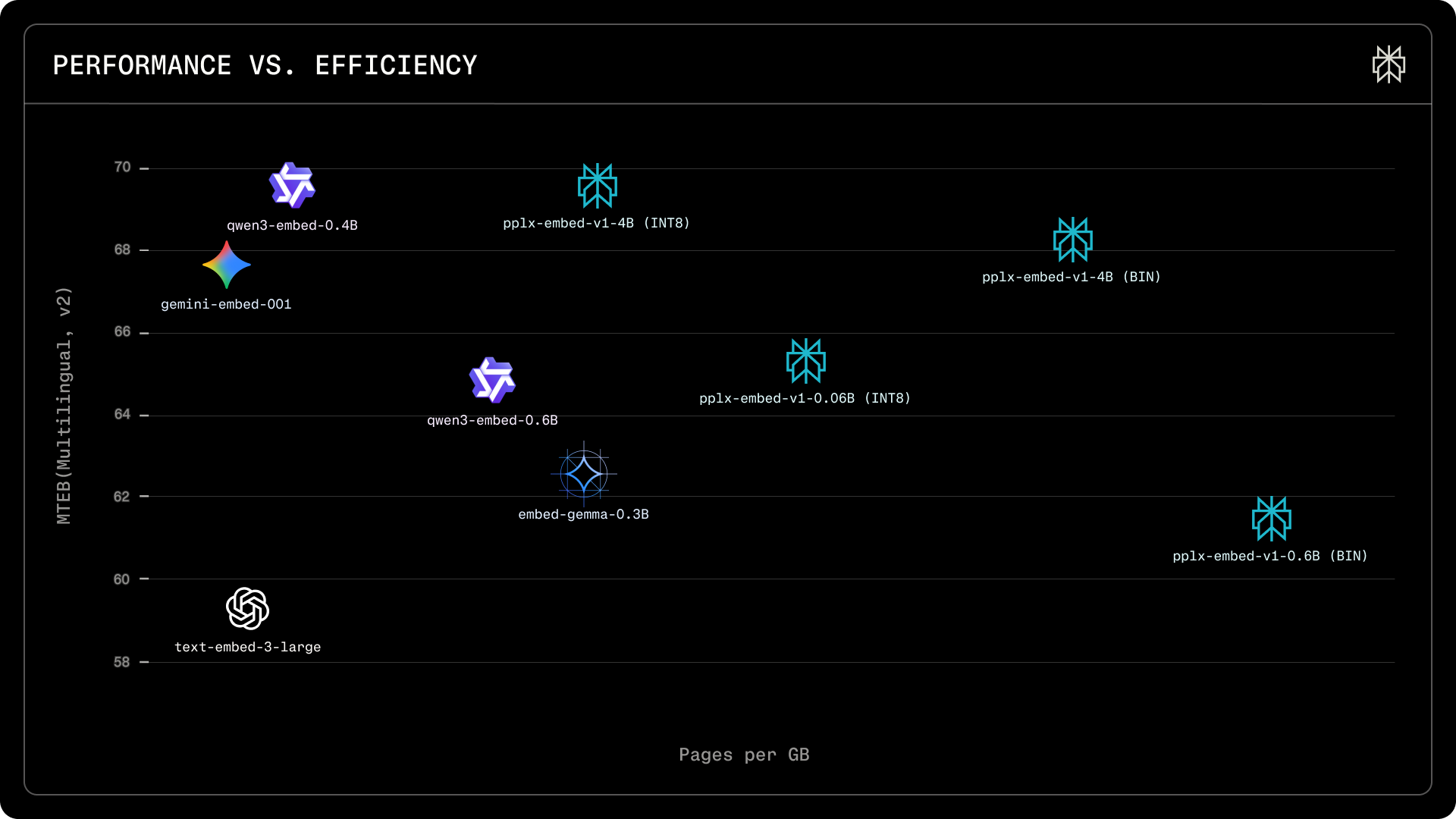

These new embedding models offer native INT8 and binary quantization, reducing storage by 4x and 32x compared to standard FP32, a key benefit for organizations operating at web scale. Unlike previous models, they do not require instruction prefixes, avoiding common pitfalls in real-world deployment. Both variants outperform competitors such as Qwen3-Embedding and Gemini-Embedding in multilingual, contextual, and real-world retrieval benchmarks, with the 4B binary model maintaining high retrieval accuracy while dramatically reducing storage demands. Industry observers note that early users have reported strong recall and efficiency in production pipelines. The models leverage a multi-stage training pipeline, including diffusion-based pretraining and contrastive learning, resulting in robust, context-aware embeddings suitable for retrieval-augmented generation and search applications.

Perplexity, the company behind this release, is recognized for its focus on retrieval and large-scale information access. The new models are a direct response to the challenges of web-scale document retrieval, building on their expertise in first-stage retrieval for downstream ranking systems. This move positions Perplexity at the forefront of large-scale embedding research, providing solutions that meet the needs of both speed-focused and quality-focused users.