OpenAI has rolled out GPT-5.4 mini and GPT-5.4 nano, pushing its GPT-5.4 family further down the cost and latency curve for developers building coding copilots, subagents, screenshot-driven automation, and other high-volume systems. Mini is the broader product play: it is live in the API, Codex, and ChatGPT, while nano is reserved for API users who need the smallest and cheapest GPT-5.4-class option for classification, extraction, ranking, and lightweight coding support. OpenAI is framing both as production models for workflows where response time shapes the product, not just as trimmed-down companions to its flagship stack.

GPT-5.4 mini is available today in ChatGPT, Codex, and the API.

Optimized for coding, computer use, multimodal understanding, and subagents. And it’s 2x faster than GPT-5 mini. https://t.co/DKh2cC5S3F

OpenAI (@OpenAI), March 17, 2026

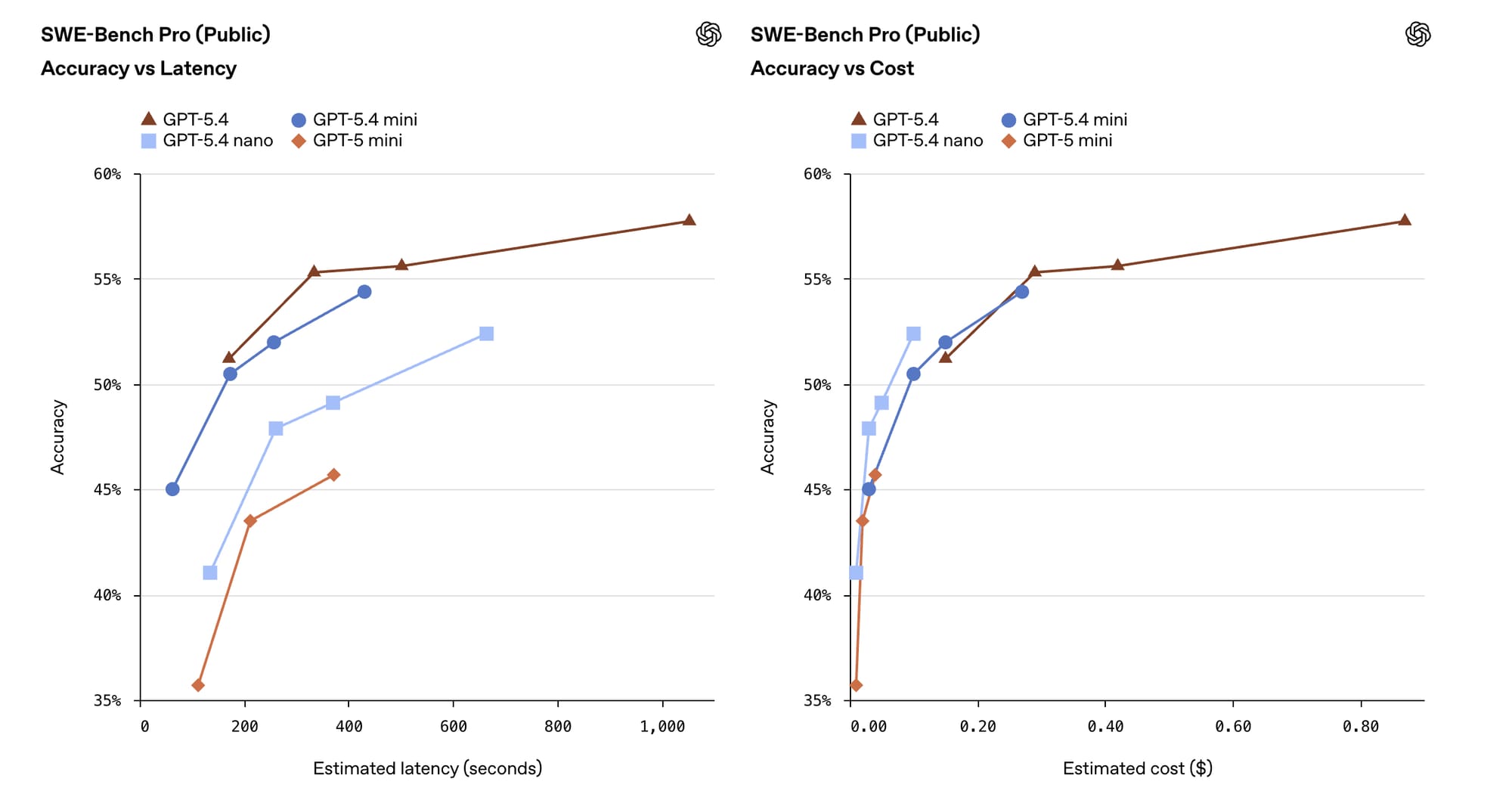

The benchmark spread shows why OpenAI is pushing this launch hard. The company says GPT-5.4 mini runs more than 2x faster than GPT-5 mini and closes much of the gap with full GPT-5.4 on coding and agent tasks, posting 54.4% on SWE-Bench Pro versus 45.7% for GPT-5 mini, and 72.1% on OSWorld-Verified versus 42.0% for GPT-5 mini. Nano sits lower in the stack, but still edges past GPT-5 mini on SWE-Bench Pro and Toolathlon while keeping the same 400,000-token context window and 128,000-token max output as mini. Both models take text and image inputs, support structured outputs and function calls, and are designed for systems that split planning and execution across multiple agents.

Pricing is where the release gets sharper. GPT-5.4 mini is priced at $0.75 per million input tokens and $4.50 per million output tokens, while GPT-5.4 nano lands at $0.20 per million input tokens and $1.25 per million output tokens. In Codex, mini consumes 30% of the GPT-5.4 quota, letting OpenAI push cheaper subagents into codebase search, file review, and other narrower jobs. In ChatGPT, mini is available to Free and Go users through the Thinking entry in the "+" (plus) menu, while higher-tier users mainly see it as a fallback for GPT-5.4 Thinking. Microsoft also moved immediately to make both models available in Foundry, with Data Zone US live now and Data Zone EU listed as coming soon.
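To make the per-token prices concrete, here is a minimal sketch of a cost estimator. The prices are the ones quoted above; the dictionary keys are illustrative labels rather than confirmed API model identifiers, and the function ignores anything the article does not mention, such as cached-input discounts or batch pricing.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Pricing:
    input_per_million: float   # USD per 1M input tokens
    output_per_million: float  # USD per 1M output tokens


# Prices as quoted in the article. The keys are illustrative labels,
# NOT confirmed API model identifiers.
PRICES = {
    "mini": Pricing(input_per_million=0.75, output_per_million=4.50),
    "nano": Pricing(input_per_million=0.20, output_per_million=1.25),
}


def estimate_cost(model: str, input_tokens: int, output_tokens: int) -> float:
    """Return the estimated cost in USD for the given token counts."""
    price = PRICES[model]
    total = (
        input_tokens * price.input_per_million
        + output_tokens * price.output_per_million
    )
    return total / 1_000_000


if __name__ == "__main__":
    # Hypothetical workload: 10,000 requests, each with 2,000 input
    # tokens and 300 output tokens.
    requests = 10_000
    for name in PRICES:
        cost = estimate_cost(name, requests * 2_000, requests * 300)
        print(f"{name}: ${cost:.2f}")
```

For that hypothetical workload of 20 million input tokens and 3 million output tokens, the arithmetic works out to about $28.50 on mini and $7.75 on nano.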

The launch also clarifies OpenAI’s platform strategy. GPT-5.4 is now the company’s default model for serious reasoning, coding, and agentic workloads, replacing GPT-5.2 in the API and GPT-5.3-Codex inside Codex. Mini and nano extend that structure rather than dilute it: the flagship plans, the smaller models execute, and the stack stretches across ChatGPT, Codex, the API, and partner clouds. OpenAI is also leaning on early customer feedback to support the case. Hebbia said GPT-5.4 mini matched or beat competing models on several output tasks and citation recall at lower cost, while Microsoft is pitching the pair as the low-latency layer for production agents that need to retrieve, route, and act in near real time.
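The division of labor described above, where the flagship plans and the smaller models execute, can be sketched as a simple routing table. This is only an illustration under stated assumptions: the routing policy, the task-kind strings, and the `Step`, `pick_tier`, and `assign` names are hypothetical, and the tier labels are display names rather than confirmed API model identifiers. No API calls are made.

```python
from dataclasses import dataclass

# Tier labels are display names for illustration,
# NOT confirmed API model identifiers.
FLAGSHIP = "GPT-5.4"
MINI = "GPT-5.4 mini"
NANO = "GPT-5.4 nano"

# Task kinds the article associates with nano; the exact strings are assumptions.
NANO_TASKS = frozenset({"classification", "extraction", "ranking"})


@dataclass(frozen=True)
class Step:
    kind: str         # e.g. "planning", "file_review", "classification"
    description: str  # human-readable summary of the work


def pick_tier(kind: str) -> str:
    """Hypothetical policy: the flagship plans, nano takes narrow
    high-volume tasks, and mini executes everything else."""
    if kind == "planning":
        return FLAGSHIP
    if kind in NANO_TASKS:
        return NANO
    return MINI


def assign(steps: list[Step]) -> list[tuple[str, str]]:
    """Pair each step's description with the tier chosen for it."""
    return [(step.description, pick_tier(step.kind)) for step in steps]


if __name__ == "__main__":
    plan = [
        Step("planning", "Break the bug report into subtasks"),
        Step("codebase_search", "Find call sites of the failing function"),
        Step("file_review", "Review the candidate patch"),
        Step("classification", "Label the ticket by component"),
    ]
    for description, tier in assign(plan):
        print(f"{tier:>12}: {description}")
```

A real system would likely route on more than a task label, for example on latency budgets or input size, but the table form keeps the cost trade-off explicit and easy to audit.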