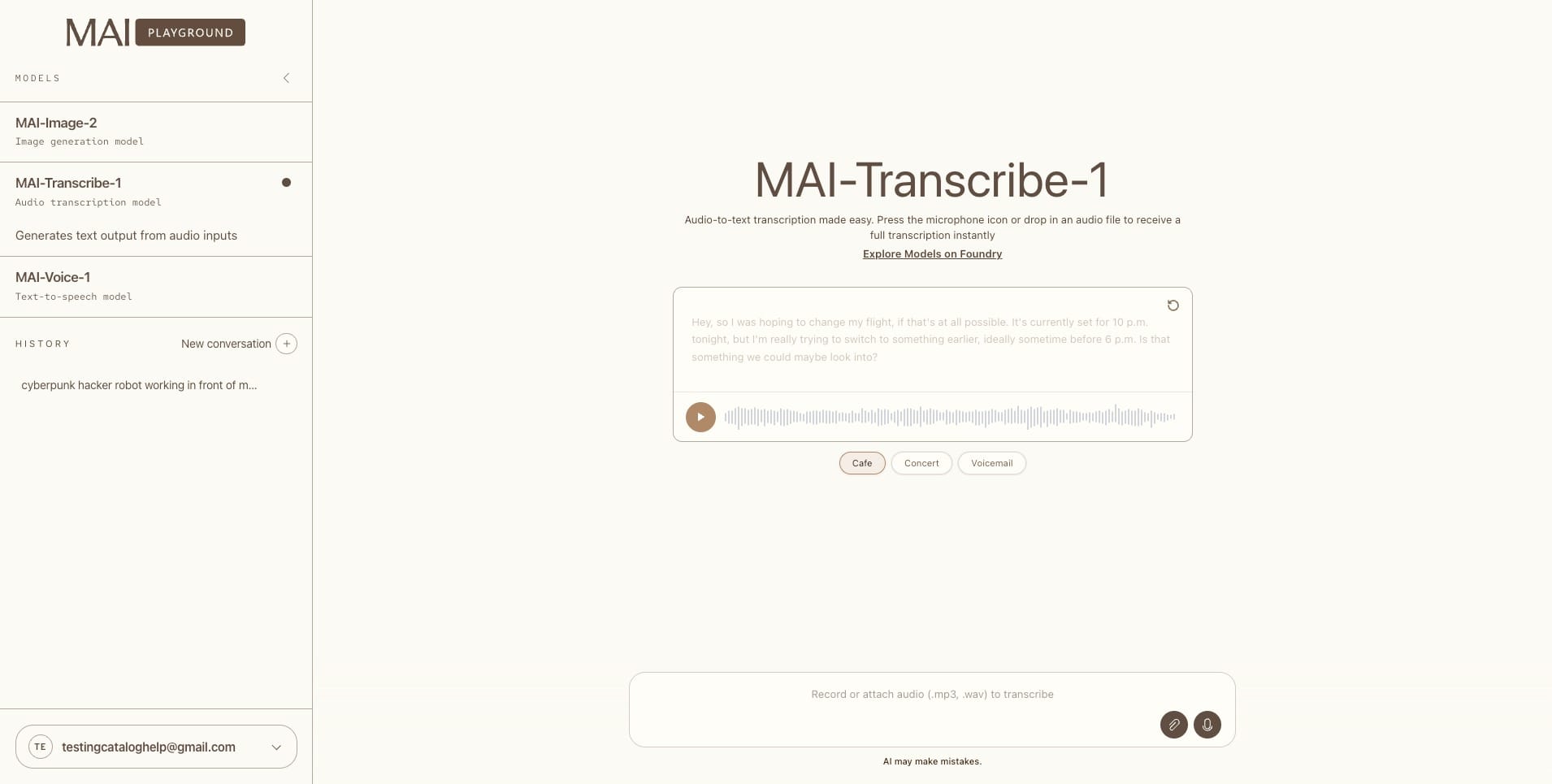

Microsoft is expanding access to its in-house MAI stack by introducing three models into Microsoft Foundry: MAI-Transcribe-1 for speech-to-text, MAI-Voice-1 for speech generation and custom voices, and MAI-Image-2 for image generation. The company states that all three models are now available in Foundry, while MAI Playground remains US-only for testing. Pricing begins at $0.36 per hour for transcription, $22 per 1 million characters for voice, and $5 per 1 million text-input tokens plus $33 per 1 million image-output tokens for image generation.

We’re bringing our growing MAI model family to every developer in Foundry, including …

— Satya Nadella (@satyanadella) April 2, 2026

· MAI-Transcribe-1, most accurate transcription model in world across 25 languages

· MAI-Voice-1, natural, expressive speech generation

· MAI-Image-2, our most capable image model yet

Start… pic.twitter.com/p0DZZcAUZ4

The most significant change is that Microsoft is no longer restricting these models mainly to Copilot-style products. MAI-Voice-1 had already been featured in Copilot Daily, Podcasts, and Copilot Labs in 2025, and MAI-Image-2 had launched in MAI Playground on March 19, 2026. The change on April 2 is the broader developer access through Foundry, which places Microsoft’s media models directly in front of enterprise builders and agent developers, rather than limiting them to product demos.

MAI-Transcribe-1 appears to be the most aggressive part of the release. Microsoft claims it leads the FLEURS benchmark across the top 25 most-used languages and surpasses Scribe v2, Whisper-large-v3, GPT-Transcribe, and Gemini 3.1 Flash on that test set. The model card notes that it is tuned for noisy real-world audio, supports WAV, MP3, and FLAC, and is designed for captions, meeting notes, call analysis, accessibility workflows, and voice agents. Microsoft also mentions that real-time transcription, diarization, and context biasing are not yet available but are planned for a future release.

MAI-Voice-1 is being positioned as the speech layer for the next wave of voice agents. Microsoft states it can generate 60 seconds of audio in one second and now allows customers to create a custom voice in Foundry from a few seconds of audio. This aligns with Microsoft’s broader Voice Live initiative, where speech recognition, generative AI, and speech synthesis are combined into one stack for low-latency voice agents.

MAI-Image-2 is targeted at creative and commercial work rather than casual image generation. Microsoft claims the model is built for photographers, designers, and visual storytellers, with an emphasis on natural lighting, skin tones, texture, and in-image text. Foundry documentation lists PNG output, 32K context, and image sizes up to a total of 1,048,576 pixels, while the model card states it outperformed MAI-Image-1 in categories including photorealism, branding, portraits, and text rendering. Microsoft has already begun integrating it into Copilot, with phased rollouts also underway in Bing and PowerPoint, and WPP is named as an early enterprise partner.