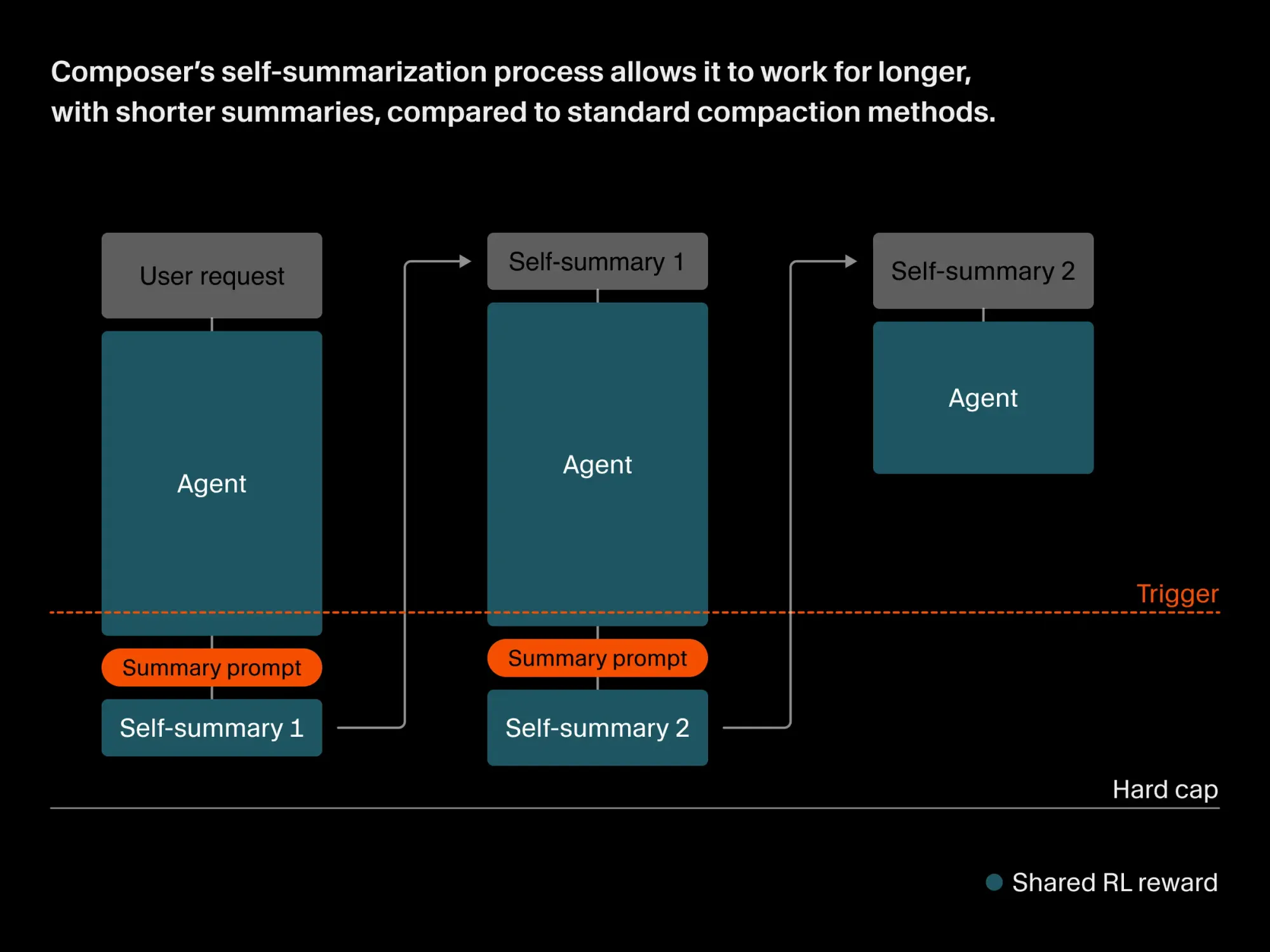

Composer, developed by Cursor, is a new AI model built for agentic coding and aimed at long-horizon tasks. It is trained with a reinforcement learning process called self-summarization, in which the model condenses its own working context so it can keep working past the limit of a single context window. The release targets developers and researchers who need an AI assistant to sustain reasoning and retain context over hundreds of steps in complex coding tasks.

"We trained Composer to self-summarize through RL instead of a prompt. This reduces the error from compaction by 50% and allows Composer to succeed on challenging coding tasks requiring hundreds of actions."

Cursor (@cursor_ai), March 17, 2026

Cursor currently evaluates Composer internally on CursorBench and Terminal-Bench 2.0. According to the company, the results show higher accuracy and more efficient token use than established prompt-based compaction methods. Composer's self-summaries average 1,000 tokens, which Cursor says reduces both context loss and computational overhead.
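To make the idea of compaction concrete, here is a minimal Python sketch of an agent loop that replaces its history with a short summary whenever a token budget is exceeded. It is not Cursor's implementation: `AgentContext`, `count_tokens`, and `truncating_summarizer` are hypothetical names, the tokenizer is a whitespace split, and the summarizer is a placeholder that keeps only the most recent words. In Composer, by the article's account, the model writes the summary itself.

```python
from dataclasses import dataclass, field
from typing import Callable, List


def count_tokens(text: str) -> int:
    """Crude stand-in for a tokenizer: counts whitespace-separated words."""
    return len(text.split())


def truncating_summarizer(messages: List[str], budget: int) -> str:
    """Placeholder summarizer (assumption): keeps the most recent words.

    The result is at most `budget` tokens, counting the "SUMMARY:" marker.
    """
    words = " ".join(messages).split()
    keep = max(budget - 1, 0)
    tail = words[-keep:] if keep else []
    return " ".join(["SUMMARY:"] + tail)


@dataclass
class AgentContext:
    max_tokens: int                # hypothetical context budget
    summary_budget: int = 1000     # summary size quoted in the article
    messages: List[str] = field(default_factory=list)
    compactions: int = 0

    def total_tokens(self) -> int:
        return sum(count_tokens(m) for m in self.messages)

    def append(self, message: str,
               summarize: Callable[[List[str], int], str]) -> None:
        """Add a message, compacting the history if the budget is exceeded."""
        self.messages.append(message)
        if self.total_tokens() > self.max_tokens:
            # Replace the entire history with a single short summary.
            self.messages = [summarize(self.messages, self.summary_budget)]
            self.compactions += 1


if __name__ == "__main__":
    ctx = AgentContext(max_tokens=50, summary_budget=10)
    for i in range(30):
        ctx.append(f"step {i} ran tool and observed output",
                   truncating_summarizer)
    print(ctx.compactions, ctx.total_tokens())
```

Whatever the summarizer drops is gone for every later step, which is why the quality of the summary matters so much on tasks that run for hundreds of actions.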

Cursor is focused on advancing agentic AI for software engineering. Its approach builds compaction directly into the training loop, so Composer learns which contextual information is most important to retain. Because effective self-summaries are rewarded through reinforcement learning, Cursor positions Composer as a more robust alternative to current compaction strategies, which often rely on lengthy, manually crafted prompts and are prone to information loss.
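One way to picture compaction inside the training loop is to treat each self-summary as just another model output that shares in the rollout's final reward. The Python sketch below illustrates that idea under stated assumptions: the article does not describe Cursor's reward design, so the `Segment` type, the `credit_segments` function, and the simple reward-minus-baseline credit are all hypothetical.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass
class Segment:
    kind: str   # "action" or "summary" (hypothetical labels)
    text: str


def credit_segments(rollout: List[Segment], final_reward: float,
                    baseline: float = 0.0) -> List[Tuple[Segment, float]]:
    """Assign the same outcome-based credit to every segment.

    Assumption: summaries are credited exactly like actions, so a summary
    that loses crucial context lowers the reward of the rollout it belongs to.
    """
    advantage = final_reward - baseline
    return [(segment, advantage) for segment in rollout]


if __name__ == "__main__":
    rollout = [
        Segment("action", "run the test suite"),
        Segment("summary", "tests fail in the parser; fix is in progress"),
        Segment("action", "patch the parser and rerun the tests"),
    ]
    for segment, credit in credit_segments(rollout, final_reward=1.0,
                                           baseline=0.25):
        print(segment.kind, credit)
```

Under this scheme no hand-written prompt specifies what a good summary looks like; the signal comes only from whether the task succeeds after compaction.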

Early testing shows Composer solving problems that have stumped other large models, which suggests the approach could extend to more complex, multi-step programming challenges in future releases.