Utopai Studios has opened public access to PAI, a cinematic AI video model and workflow built for sustained, multi-scene storytelling. PAI became publicly available on March 5, 2026. Utopai is positioning it for creators and studios that want to take narrative video from early development through final output without stitching together a patchwork of separate tools.

The pitch targets a gap in current AI video use. Many systems perform best on short clips or visual experiments, while longer narrative work depends on continuity across scenes and on the ability to revise sequences over time without losing the original creative intent. At the same time, professional teams have treated copyright exposure, character ownership, and readiness for public release as gating factors in deciding whether AI video can move beyond testing and into production.

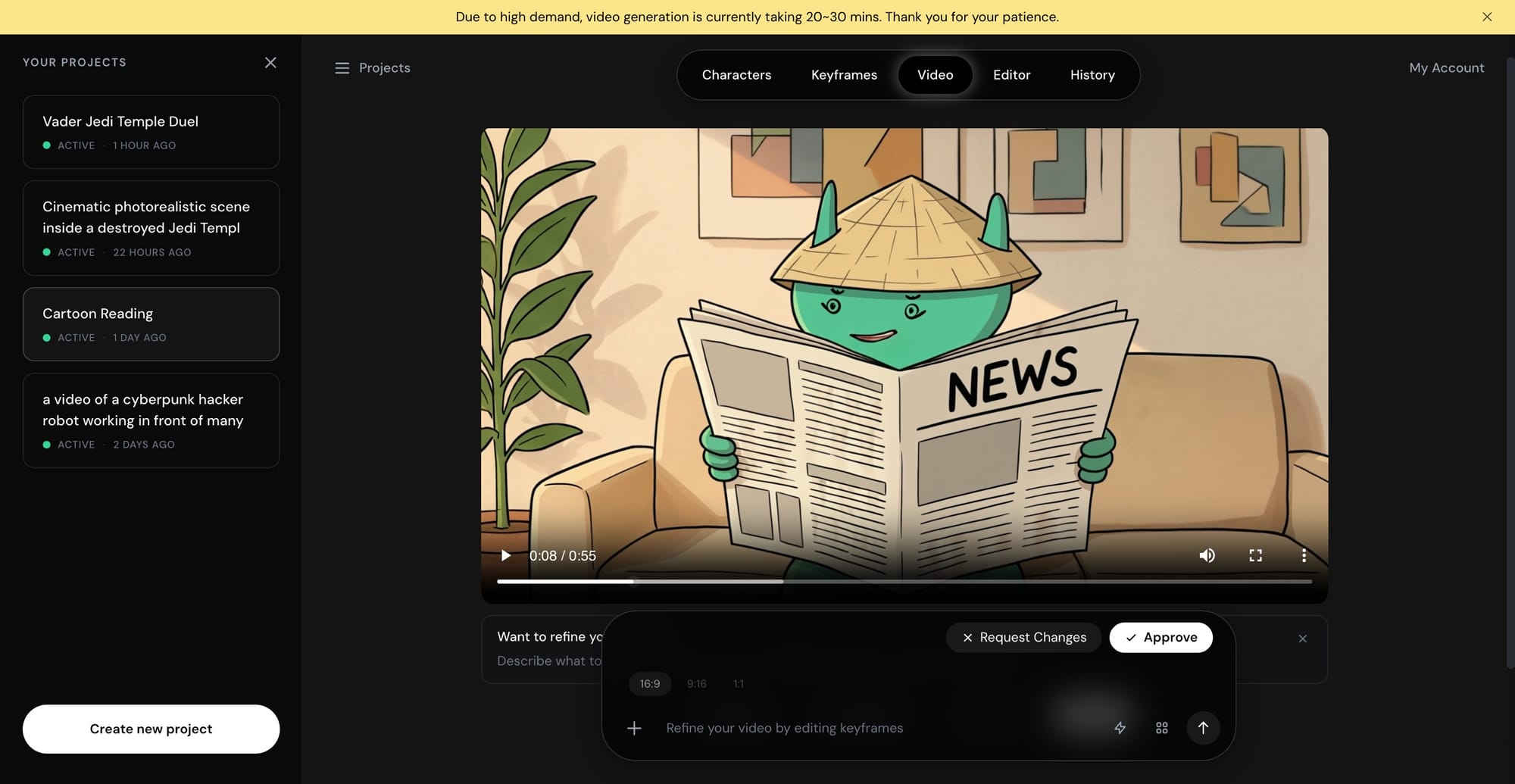

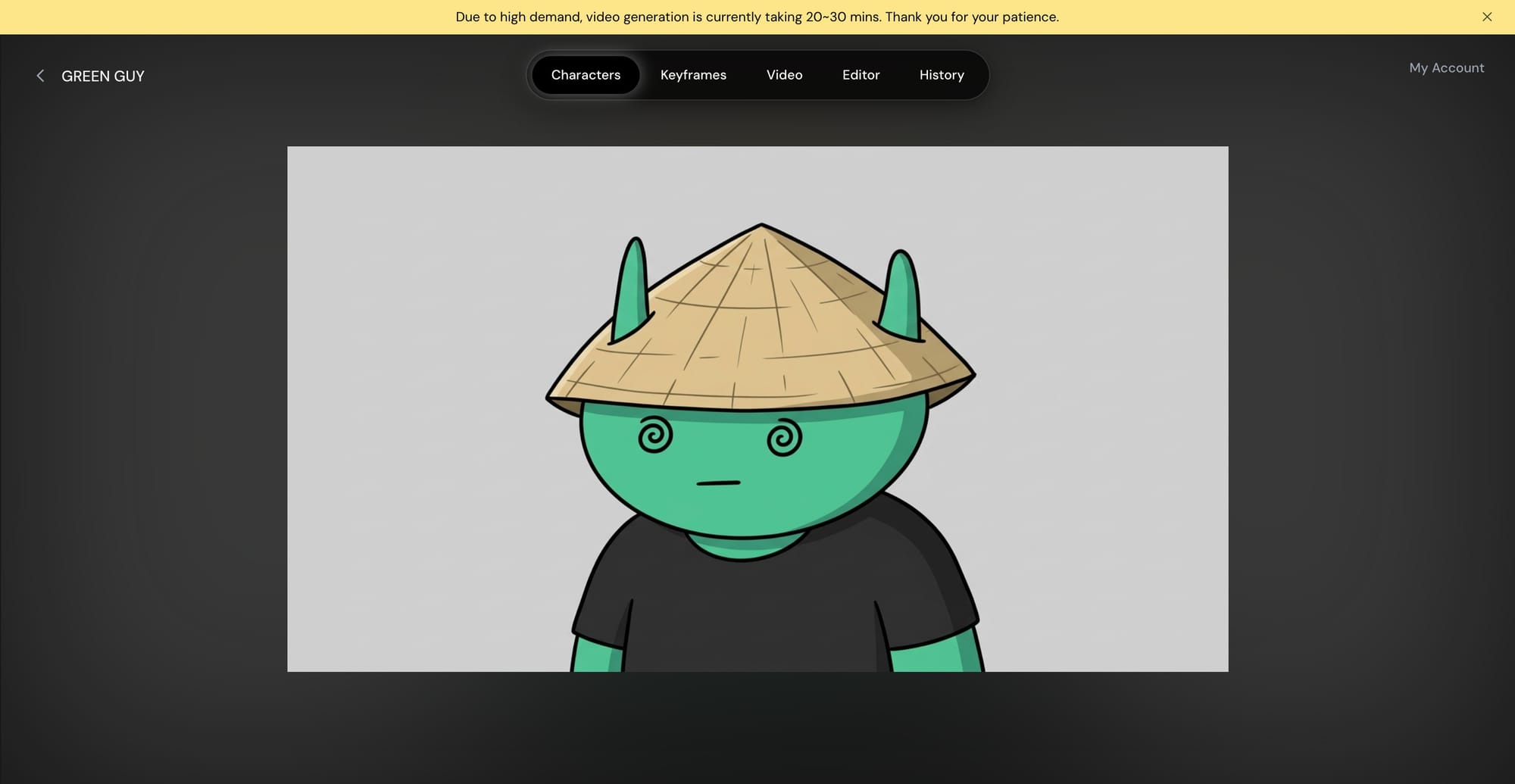

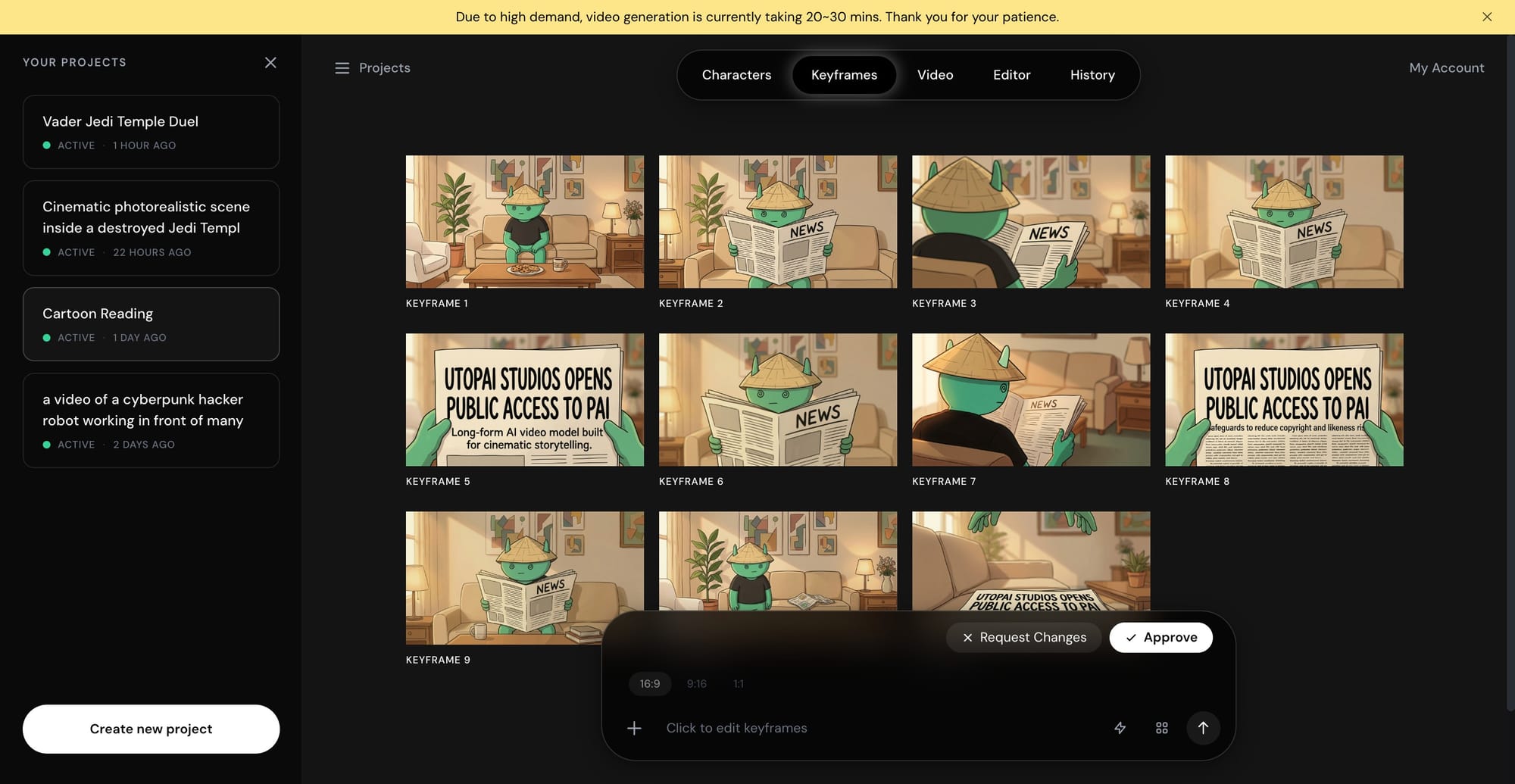

PAI is designed around story continuity and iterative control. Utopai says the system can keep characters and environments stable across a multi-shot sequence, reducing the visual drift that breaks longer story arcs. It also supports story-level editing, which lets creators revise performance, motion, and composition either at the frame level or across the full video, rather than regenerating an entire sequence for each change. In its launch configuration, PAI supports up to 16 shots in a single narrative flow, produces videos up to one minute long, and renders at resolutions up to 4K. The workflow is built for multi-turn iteration through natural-language direction, so teams can refine a sequence step by step while prior context stays inside the same session.

A second pillar is infringement risk management. Utopai says PAI blocks, at the workflow level, generation that draws on copyrighted IP, protected characters, or the likeness of public figures. The intent is to reduce accidental infringement while creators develop original worlds and characters intended for distribution, especially when multiple collaborators iterate quickly and the boundary between reference and replication can blur.

Utopai Studios is based in Mountain View, California, and describes itself as a video storytelling technology hub focused on cinematic generation models and agentic workflows. The company frames PAI as infrastructure for a creator economy in which new IP is increasingly developed outside traditional studio pipelines, with audiences forming around worlds and characters before projects enter conventional production. Utopai says its leadership team includes veterans of Google Research, Meta Superintelligence, Amazon AGI, Adobe Firefly, and various film and technology organizations, a mix the company says grounds the product in both model development and real production constraints.

Before the public release, Utopai says, PAI was used internally across its film and television development workflows. The model has also been used at Utopai East, a joint venture formed after the acquisition of Seoul-based Alquimista Media, where the company says it is developing a slate of 15 film and TV projects. With PAI now publicly available, Utopai is effectively putting that internal narrative pipeline in the hands of independent creators and professional teams looking for longer-form, revision-friendly AI video workflows built for distribution.