OpenAI has introduced GPT-5.4, a new generation of its flagship AI model designed to extend the capabilities of large language models across reasoning, coding, multimodal understanding, and agent-based workflows. The release marks the latest step in the GPT-5 model family and reflects the company’s push toward AI systems that can operate as more capable assistants for developers, enterprises, and everyday users. GPT-5.4 is being integrated across OpenAI’s ecosystem, including ChatGPT and the API platform, allowing developers and organizations to incorporate the model into applications ranging from software development tools to enterprise automation systems.

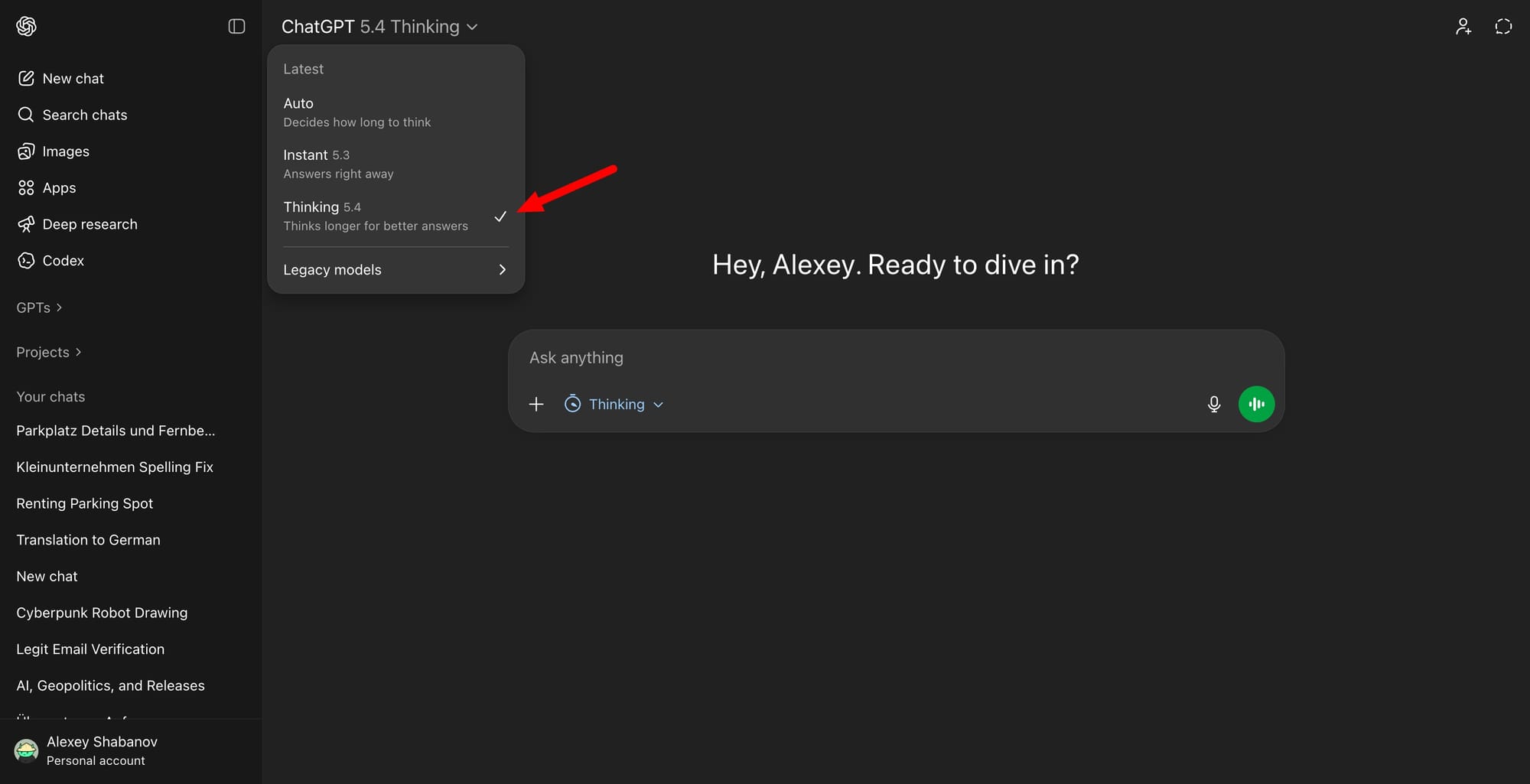

OpenAI (@OpenAI), March 5, 2026:

GPT-5.4 Thinking and GPT-5.4 Pro are rolling out now in ChatGPT.

GPT-5.4 is also now available in the API and Codex.

GPT-5.4 brings our advances in reasoning, coding, and agentic workflows into one frontier model. pic.twitter.com/1hy6xXLAmJ

The model builds on the architecture and training approach introduced with earlier GPT-5 variants while expanding performance in several key areas. GPT-5.4 is designed to handle longer contexts, more complex reasoning tasks, and multi-step problem solving with higher reliability. Improvements in coding ability are a central focus of the release, enabling the model to generate, review, and modify code across multiple programming languages while maintaining project-level context. This positions GPT-5.4 as a foundation for advanced AI coding agents capable of coordinating tasks, debugging software, and assisting with large codebases.

OpenAI Developers (@OpenAIDevs), March 5, 2026:

Codex got more speed.

With /fast mode, GPT-5.4 runs 1.5x faster with the same intelligence and reasoning.

Move through coding tasks, iteration, and debugging while staying in flow. pic.twitter.com/Xlzmeozzc4

GPT-5.4 also strengthens multimodal capabilities, enabling the model to work with text, images, and structured data within a single reasoning process. These improvements allow developers to build applications where the model can interpret diagrams, analyze screenshots, or process visual information while generating written explanations or executable instructions. The architecture is optimized for tool use, meaning the model can call external systems, access APIs, and orchestrate workflows as part of broader automated processes.
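To make the tool-use idea concrete, here is a minimal sketch of the loop an application typically runs around a tool-calling model: the model asks for a tool, the application executes it, and the result is fed back until the model produces a final answer. Everything in it is an assumption made for illustration. `fake_model`, the `TOOLS` registry, and the message format are hypothetical stand-ins, not OpenAI's API or GPT-5.4's actual interface.

```python
import json
from typing import Callable, Dict, List

# Hypothetical local tool the "model" is allowed to call (illustrative only).
def add(a: float, b: float) -> float:
    return a + b

TOOLS: Dict[str, Callable] = {"add": add}

def fake_model(messages: List[dict]) -> dict:
    """Stand-in for a real model call. NOT a real API.

    It requests the `add` tool once, then answers using the tool result.
    """
    last = messages[-1]
    if last["role"] == "tool":
        return {"type": "final", "content": f"The result is {last['content']}."}
    return {
        "type": "tool_call",
        "name": "add",
        "arguments": json.dumps({"a": 2, "b": 3}),
    }

def run_agent(user_prompt: str, max_steps: int = 5) -> str:
    """Alternate between model replies and tool execution until a final answer."""
    messages = [{"role": "user", "content": user_prompt}]
    for _ in range(max_steps):
        reply = fake_model(messages)
        if reply["type"] == "final":
            return reply["content"]
        tool = TOOLS.get(reply["name"])
        if tool is None:
            # Report unknown tools back to the model instead of crashing.
            result = f"error: unknown tool {reply['name']}"
        else:
            result = tool(**json.loads(reply["arguments"]))
        messages.append({"role": "tool", "name": reply["name"], "content": str(result)})
    raise RuntimeError("agent did not finish within max_steps")

print(run_agent("What is 2 + 3?"))
```

The `max_steps` cap is a design choice in this sketch: it keeps a misbehaving loop from calling tools indefinitely.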

The release is aimed at a wide spectrum of users, from individual developers building AI-powered tools to large organizations deploying AI across internal workflows. OpenAI positions GPT-5.4 as a core model for agentic systems, where AI agents can plan tasks, execute actions, and coordinate with external tools. This reflects a broader shift in the AI industry toward autonomous or semi-autonomous software agents that assist with complex digital tasks.

ARC Prize (@arcprize), March 5, 2026:

GPT-5.4 and GPT-5.4 Pro from @OpenAI on ARC-AGI Semi Private

ARC-AGI-2:

- GPT-5.4: 74.0%, $1.52/task
- GPT-5.4 Pro: 83.3%, $16.41/task

pic.twitter.com/vpBlCIDrUb
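The trade-off in the ARC Prize figures above can be worked out with a few lines of arithmetic. The sketch below uses only the four quoted numbers; the derived quantities (cost ratio and extra cost per additional percentage point) are illustrative calculations, not metrics reported by ARC Prize.

```python
# Figures as quoted in the ARC Prize post (ARC-AGI-2, Semi Private set).
results = {
    "GPT-5.4": {"score_pct": 74.0, "cost_per_task_usd": 1.52},
    "GPT-5.4 Pro": {"score_pct": 83.3, "cost_per_task_usd": 16.41},
}

def compare(base: dict, other: dict) -> dict:
    """Derive simple cost/score comparisons between two result rows."""
    score_gain = other["score_pct"] - base["score_pct"]
    extra_cost = other["cost_per_task_usd"] - base["cost_per_task_usd"]
    return {
        "score_gain_pts": score_gain,
        "cost_ratio": other["cost_per_task_usd"] / base["cost_per_task_usd"],
        "extra_usd_per_point": extra_cost / score_gain,
    }

summary = compare(results["GPT-5.4"], results["GPT-5.4 Pro"])
print(f"Score gain: {summary['score_gain_pts']:.1f} points")        # 9.3 points
print(f"Cost ratio: {summary['cost_ratio']:.1f}x per task")         # 10.8x
print(f"Extra cost per point: ${summary['extra_usd_per_point']:.2f} per task")  # $1.60
```

On these numbers, GPT-5.4 Pro scores 9.3 points higher at roughly 10.8 times the per-task cost.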

OpenAI, the company behind GPT-5.4, continues to focus on advancing frontier AI systems while expanding commercial access through APIs and consumer products. The organization has developed successive generations of GPT models that are widely used across industries for tasks including programming, research, customer support automation, and content generation. With GPT-5.4, the company is pushing further toward AI systems that combine reasoning, multimodal perception, and tool integration in a unified platform designed to support increasingly complex real-world workflows.