Luma has introduced UNI-1, a new model that combines visual understanding and image generation inside a single system. It marks a shift from separate pipelines toward one model that can interpret instructions, reason through constraints, and then render results. The company is positioning it as its first unified understanding-and-generation model, built to handle text and images in one interleaved sequence rather than treating analysis and creation as separate tasks. The distinction matters because Luma is not pitching UNI-1 as another image model focused only on aesthetics. It is framing the release as groundwork for systems that can reason, imagine, and eventually extend into video, voice agents, and interactive world simulators.

Introducing Uni-1, Luma’s first unified understanding and generation model, our next step on the path towards unified general intelligence. https://t.co/QjdrnYoWe5 pic.twitter.com/y3E984T2Mq

— Luma (@LumaLabsAI) March 5, 2026

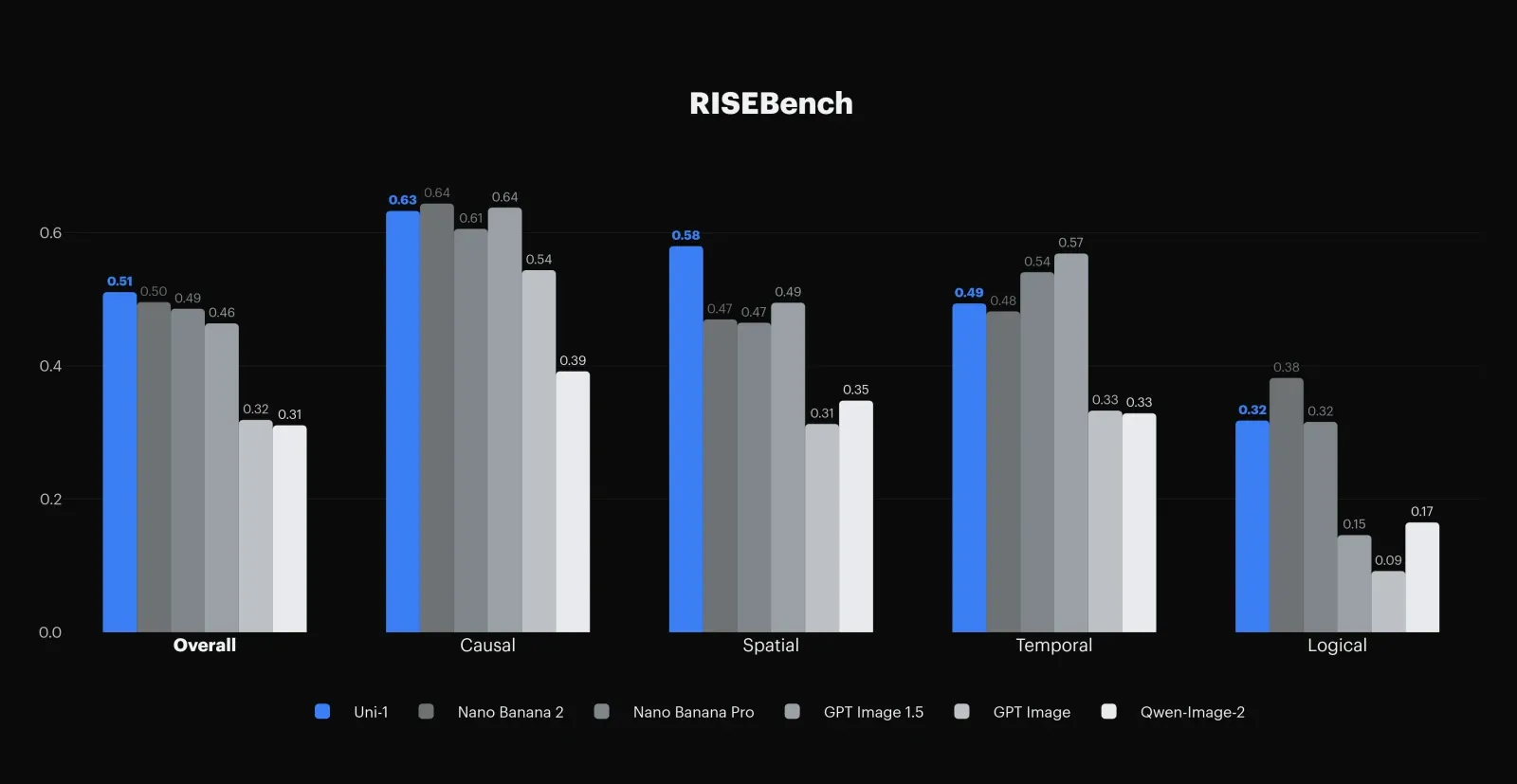

The pitch centers on two claims. First, UNI-1 performs structured reasoning before and during image synthesis, breaking down instructions and resolving spatial, causal, temporal, and logical constraints. Luma says the model delivers state-of-the-art results on RISEBench, a benchmark for reasoning-informed visual editing, and showcases examples built around continuity across time, scene plausibility, identity preservation, and reference-guided control. Second, Luma argues the reverse also holds: training the model to generate images improves its fine-grained visual understanding, including reasoning over regions, objects, and layouts. In practice, that gives UNI-1 a broader profile than a standard text-to-image model, with Luma highlighting use cases such as:

- Multi-shot storyboard generation

- Reference-based composition control

- Multi-turn refinement

- Style transfer

- Multilingual prompts

- Culture-aware outputs across memes, manga, and other aesthetics

The launch also signals where Luma is heading as a company. Over the past few years, it moved from scene reconstruction to 3D generation and then to video diffusion, and UNI-1 is being presented as the next layer in that progression. Luma’s broader platform already bundles image, video, audio, and agent-style creative workflows, and UNI-1 is now listed in its product and pricing stack.

The pricing structure includes free trial credits, paid individual plans, and separate enterprise options, which indicates UNI-1 is being folded into Luma’s commercial platform rather than treated as a closed research demo. For creators, marketers, studios, and enterprise teams, the message is direct: Luma wants to own the workflow from understanding a prompt to generating and iterating on visual media with tighter control and stronger reasoning built in from the start.