Meta’s Vibes appears to be transitioning from an AI video feed into a dedicated creation studio. After earlier indications of a standalone product, the web version now seems much closer to a launch-ready state, with project creation, image generation, video generation, and timeline editing already functioning in practice.

BREAKING 🚨: Meta silently launched its new standalone Vibes AI video editor!

— TestingCatalog News 🗞 (@testingcatalog) March 8, 2026

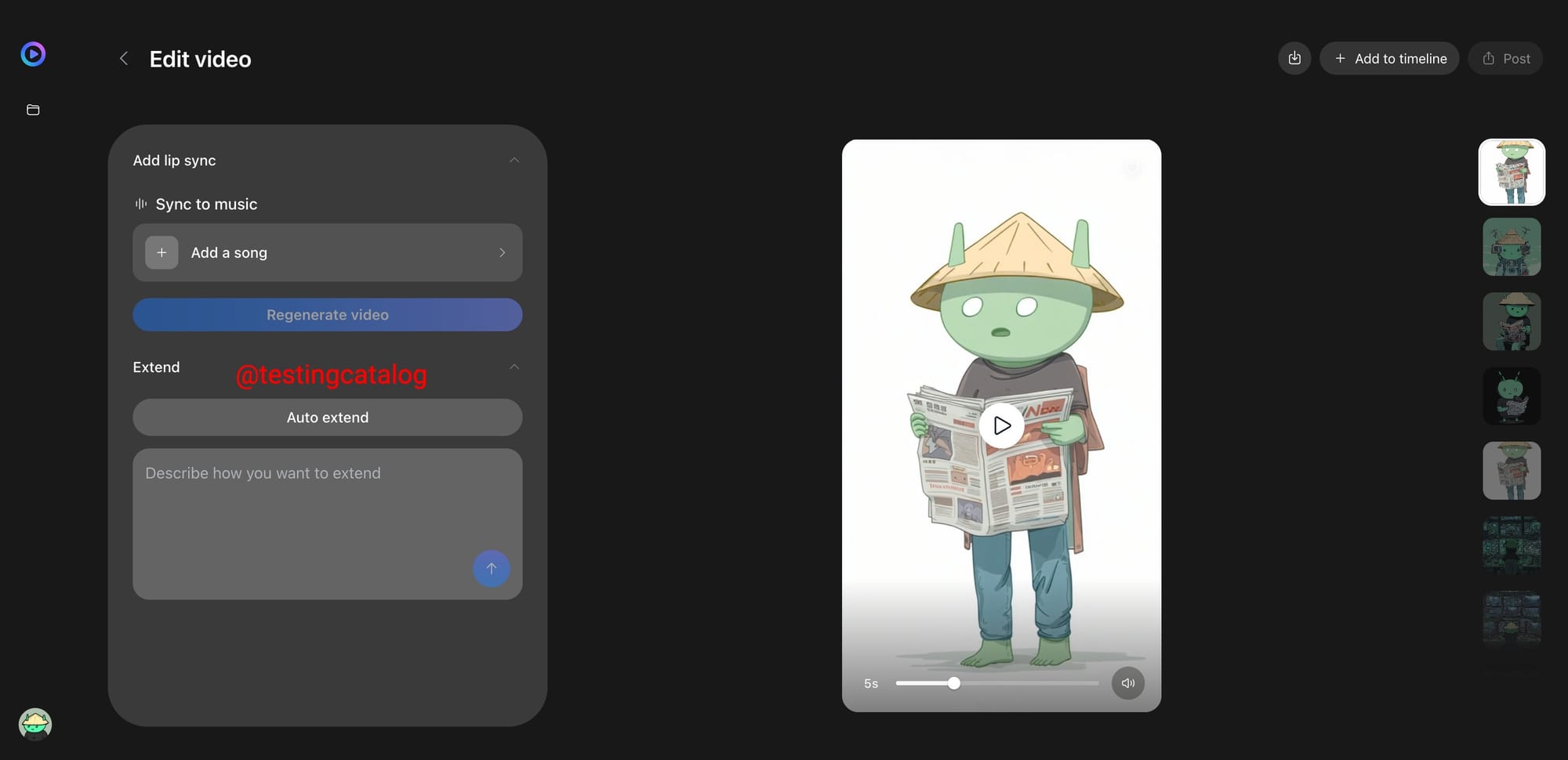

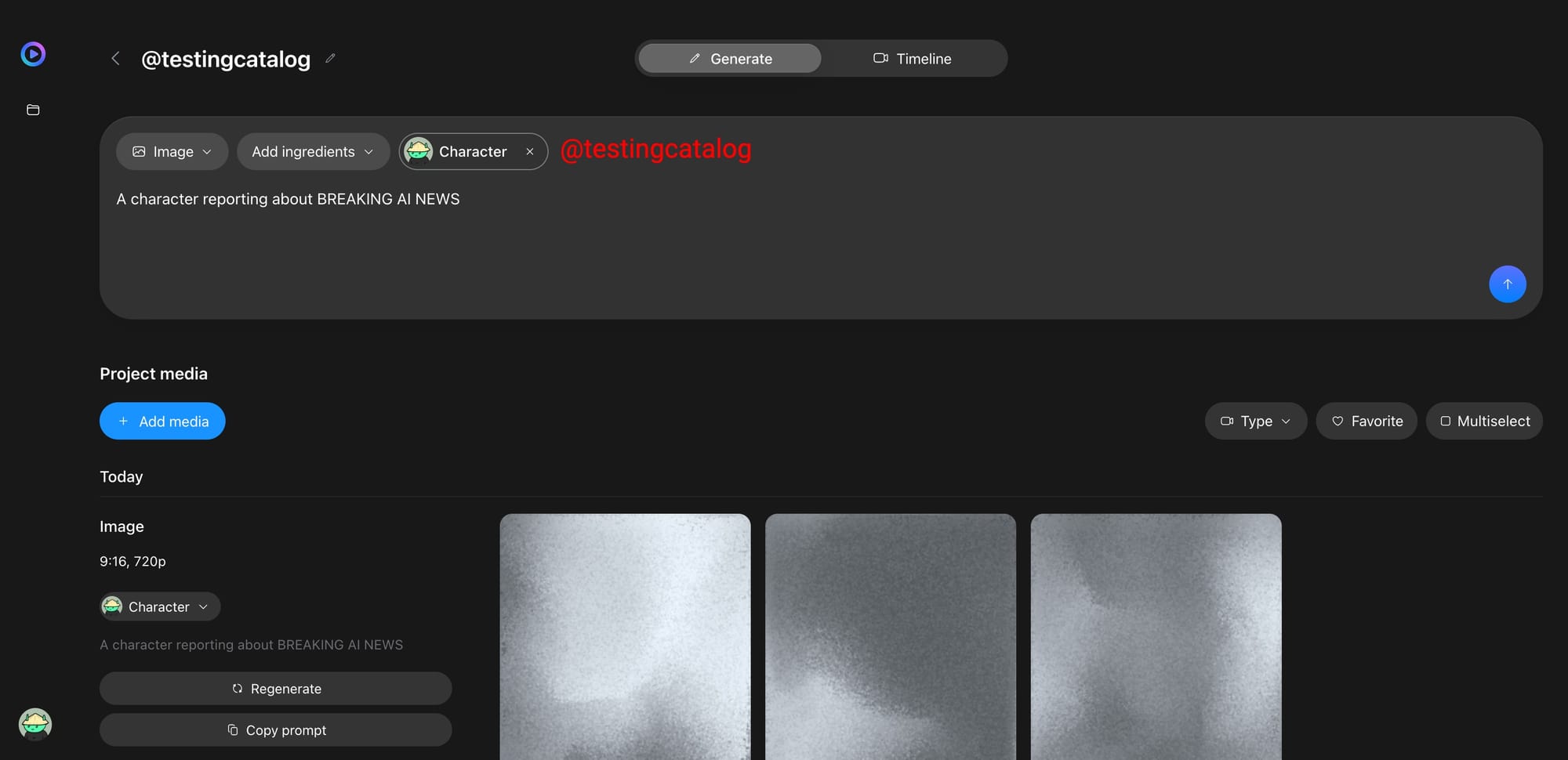

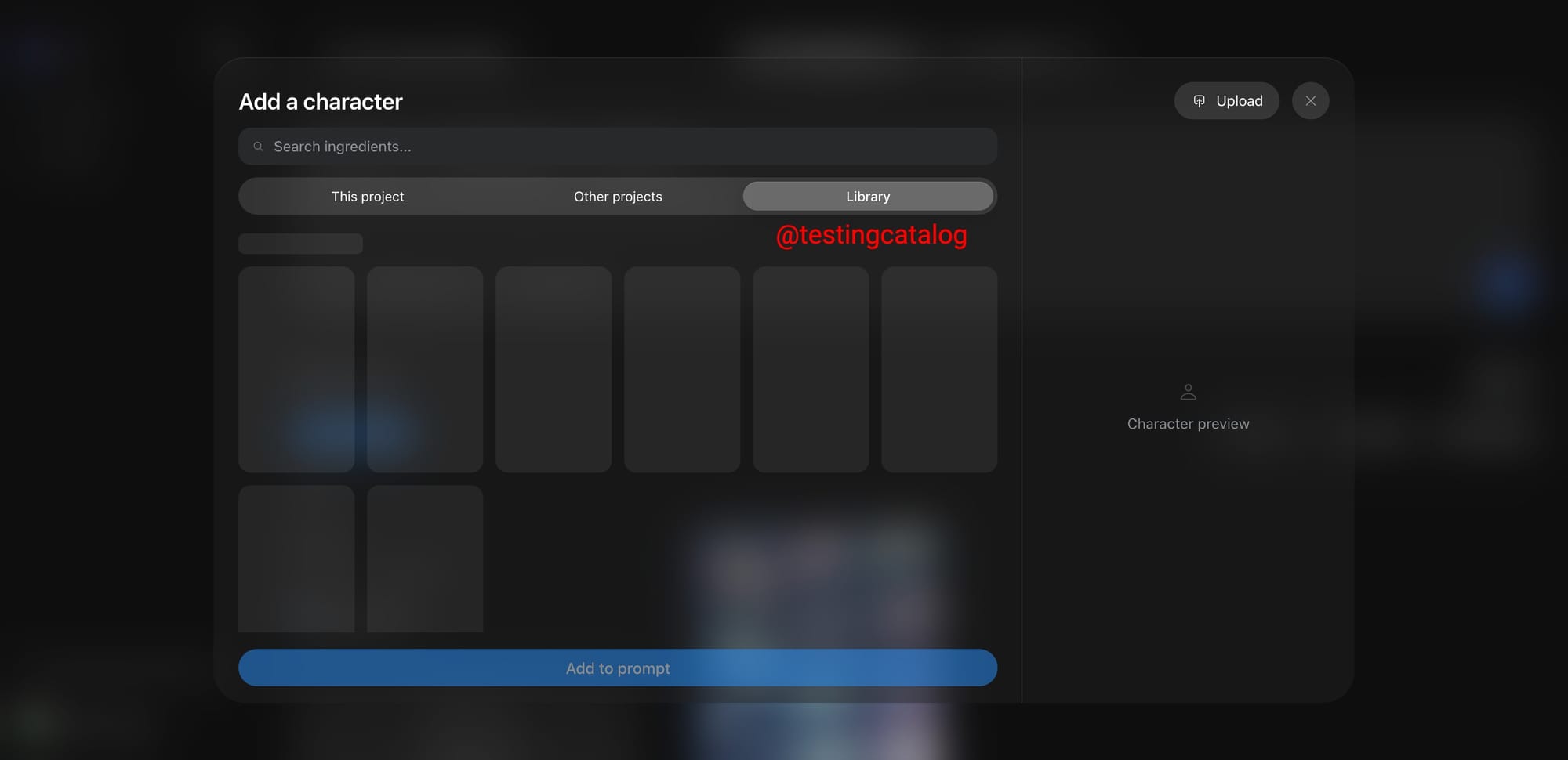

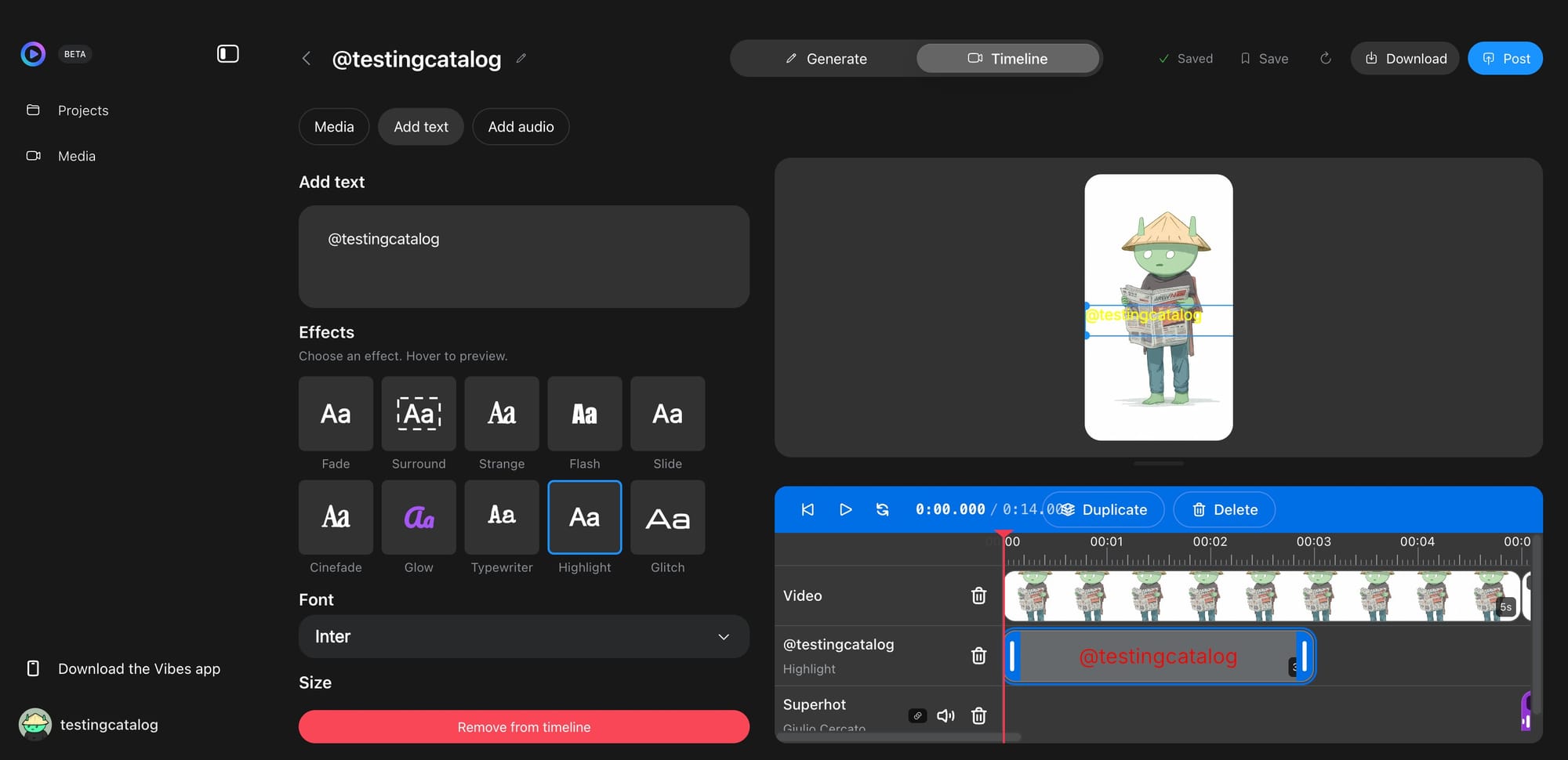

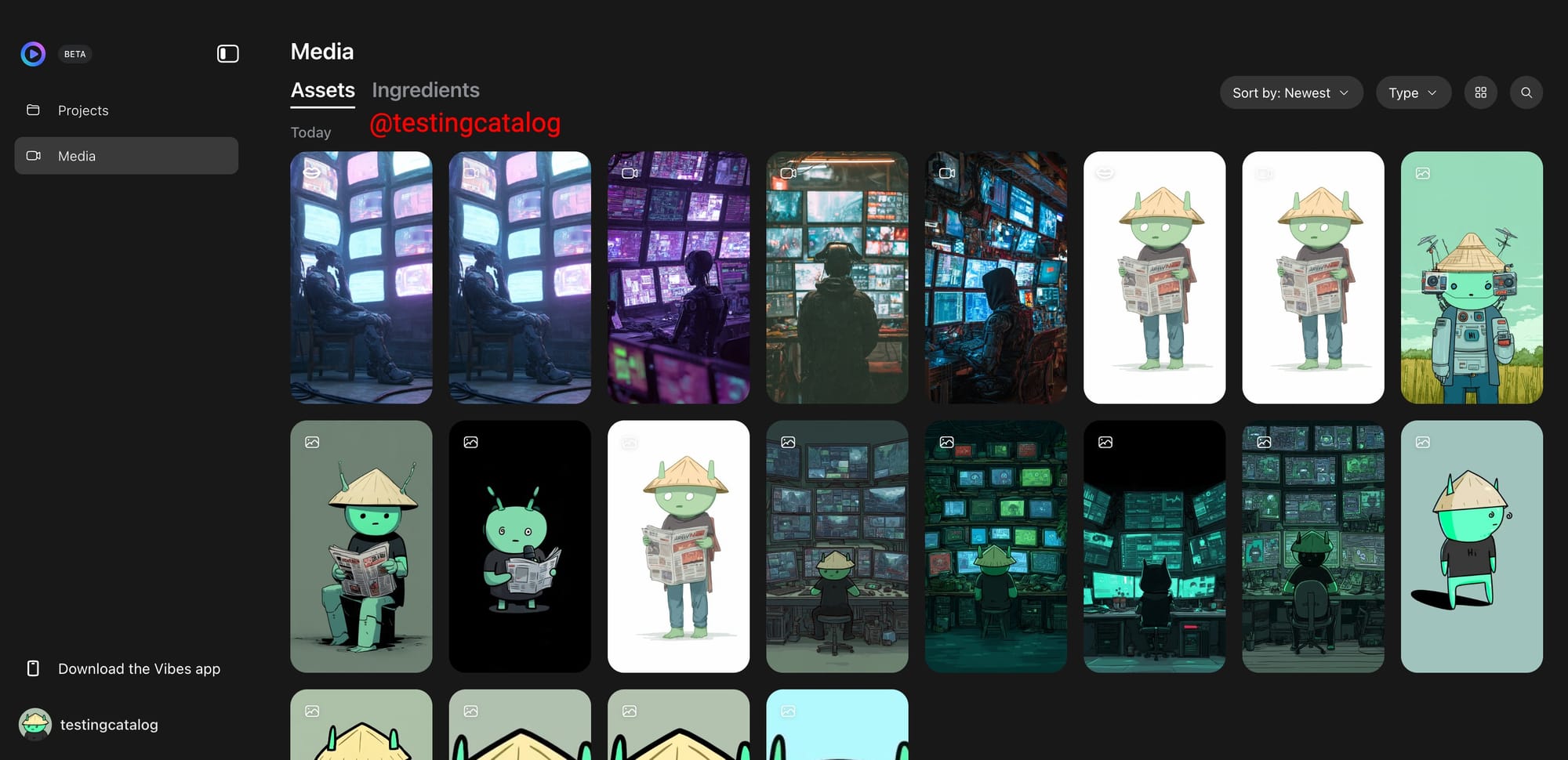

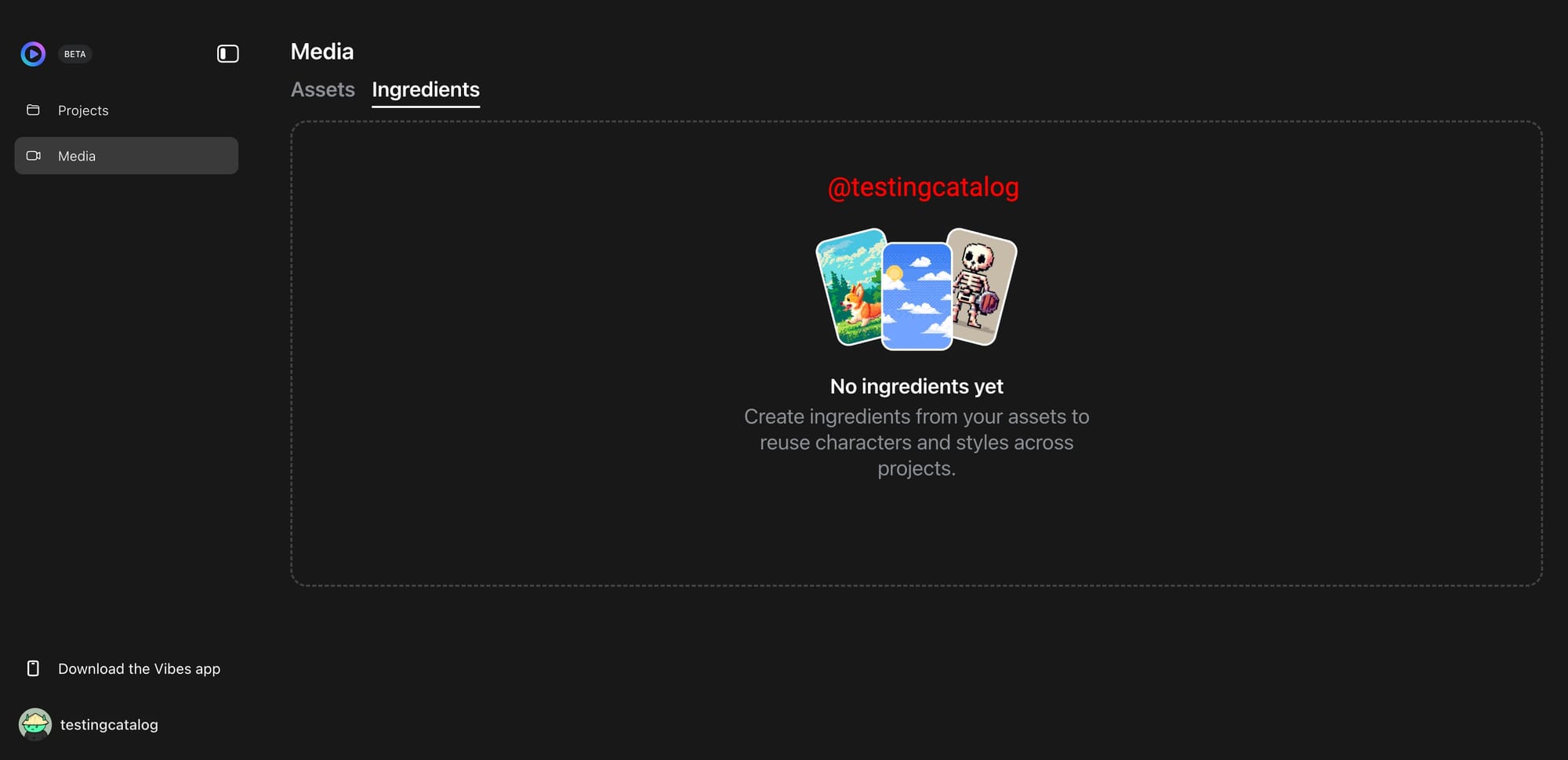

Image and video generation, ingredients, characters, lip sync, start & end frames, music, and timeline editor are supported.

Models are still the same for now 👀 pic.twitter.com/SONdQSN0rJ

This shift is important because it transforms Vibes from a lightweight social feature within Meta AI into a tool resembling Sora or Google Flow, with a project-based workflow designed for creating and refining clips rather than merely prompting once and exporting.

The leaked features indicate that Meta is building a comprehensive production stack around AI media. The current editor already supports characters, styles, formats, image-to-video animation, music, lip sync, and voice selection, while the timeline lets users edit both video and audio in the same workspace.

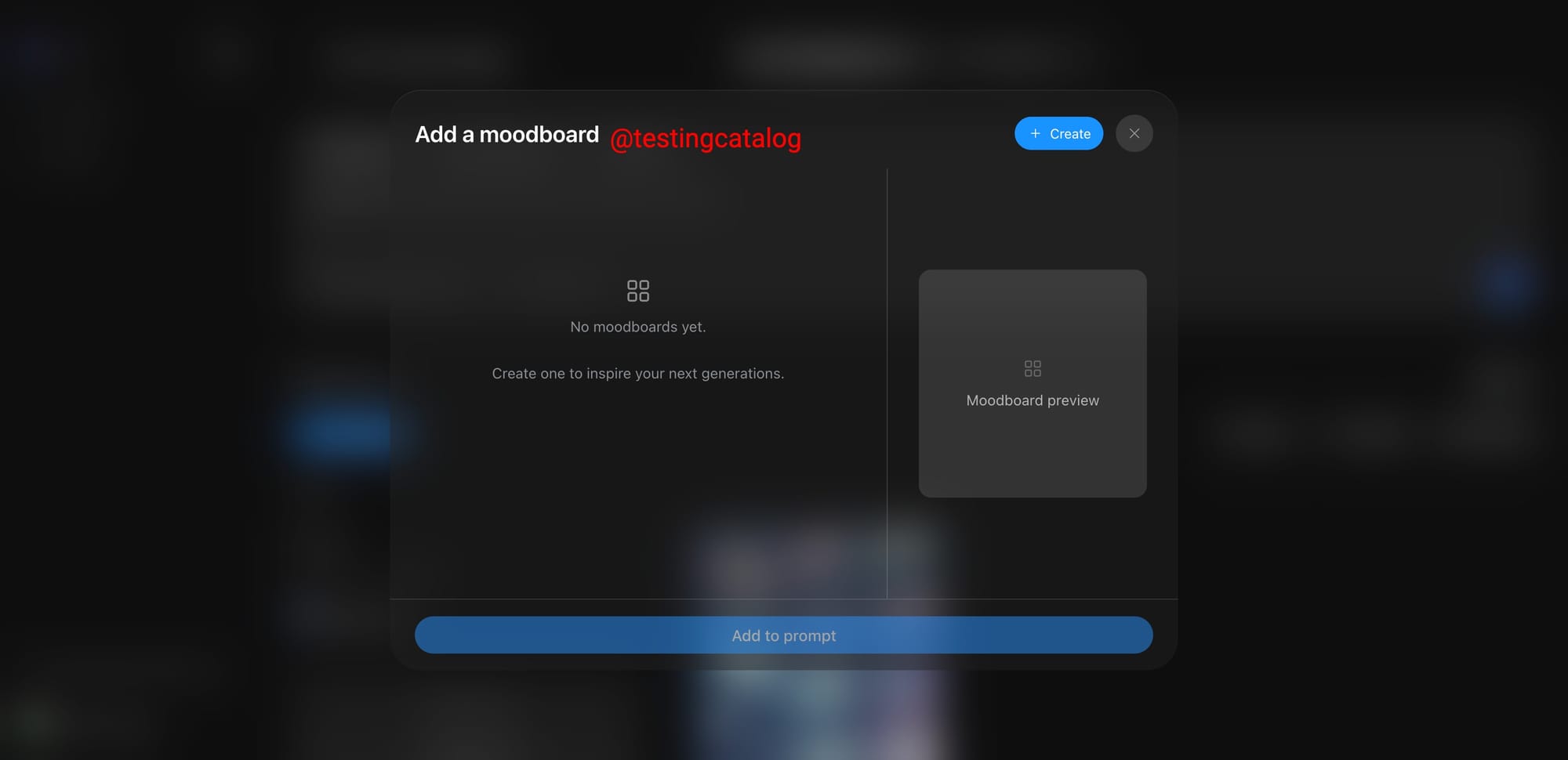

Hidden work on a media tab, moodboards, shared style libraries, character libraries, and timeline text controls suggests that Meta wants Vibes to cover the entire process, from collecting assets to editing and final polish. The main limitation at present is output quality: while the tooling appears mature, the generated results do not consistently live up to the polish of the interface.

Meta has been advancing AI media across multiple platforms at once. It launched Vibes as part of its broader AI initiative, introduced AI video editing across Meta AI and Edits in 2025, and has already showcased Movie Gen as its long-term video model effort. In this context, a standalone Vibes web app makes strategic sense.

It provides creators, marketers, and casual users with a dedicated space to generate short-form videos for Instagram, Facebook, and the broader creator ecosystem, while also offering Meta a direct testing ground for future image and video models. Although the release timing remains uncertain, the product now appears less like an experiment and more like a nearly finished editor being refined for a wider rollout.